What is an Inference Provider? A European, Privacy-First Take

An inference provider is a cloud service that specializes in running pre-trained artificial intelligence models and exposing them via API. Unlike model training—which requires…

Stories, experiments, research and deep‑dives into the world of artificial intelligence

For Chief Technology Officers, the intersection of the EU AI Act and GDPR represents the most significant regulatory challenge of the decade. The stakes…

As the regulatory landscape tightens with the enforcement of the AI Act and stricter GDPR interpretations, selecting an AI inference provider is no longer…

As European enterprises race to adopt generative AI, the dilemma often comes down to this: build your own infrastructure or rely on an API?…

Deploying AI in enterprise environments requires moving beyond vague promises. This is the official pragmatic framework for implementing GDPR-compliant AI inference. It breaks down…

This tutorial provides a complete guide to implementing the Context Engineering framework for AI agents: the architecture presented is optimized to prevent context rot, reduce execution…

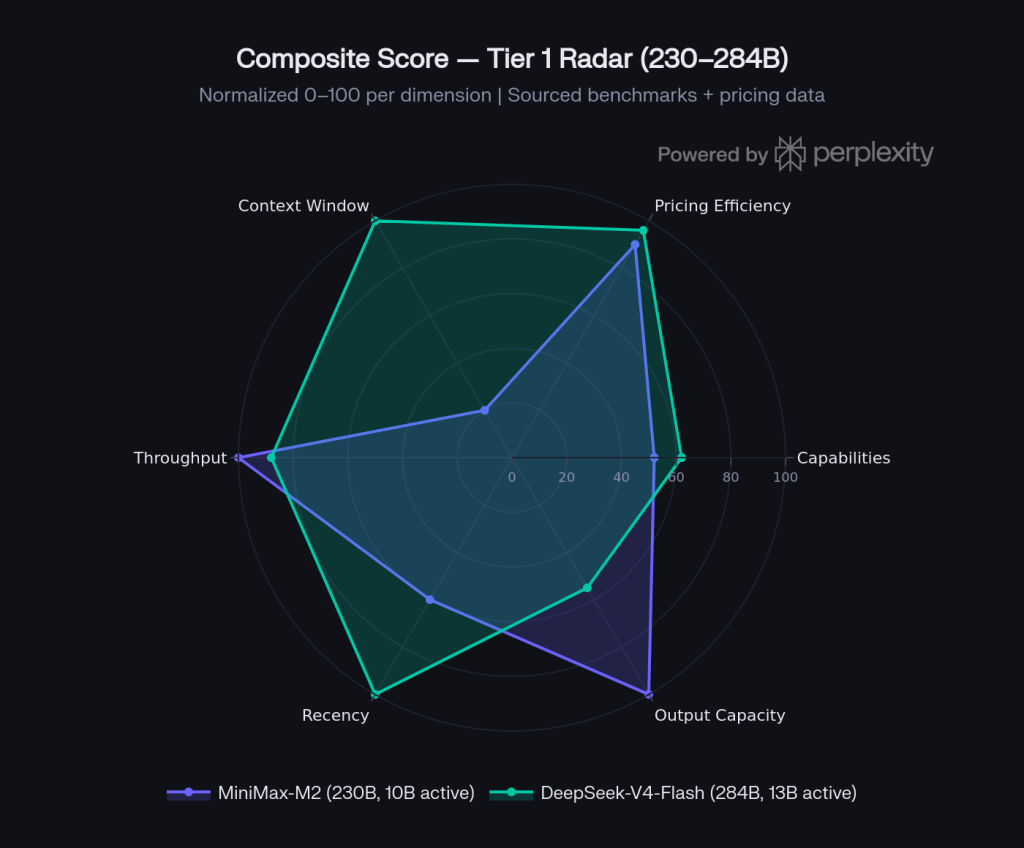

Choosing between MiniMax and DeepSeek is not a single decision — it depends on which size tier you are operating in. This article organizes…

DFlash is an effective speculative decoding algorithm designed to accelerate Large Language Model (LLM) inference without altering the core weights of your main verifier…

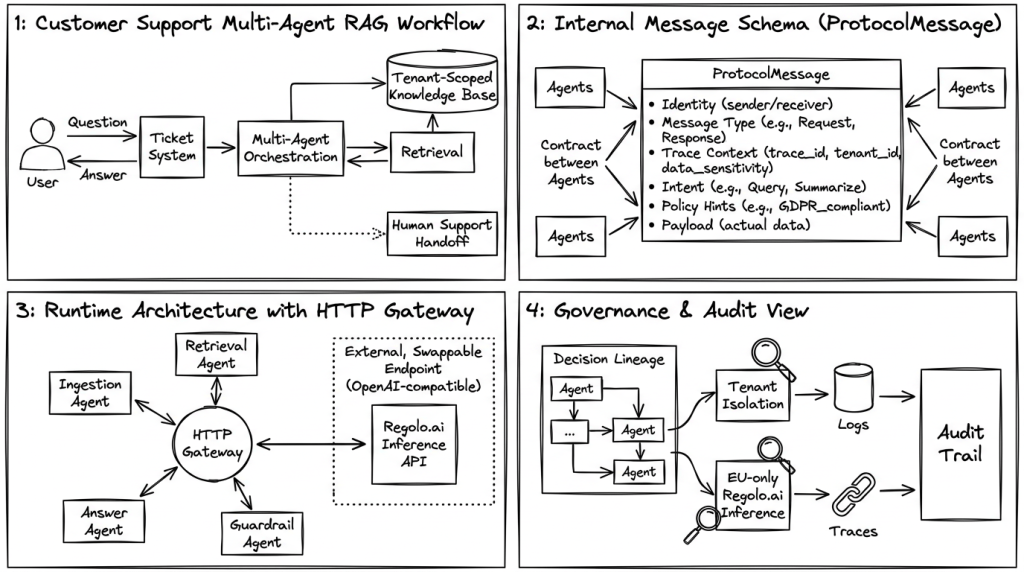

Most multi‑agent systems in production still “just pass prompts around”, which is exactly what breaks as soon as you add enterprise clients, ticket data,…

An embedding is a way of turning a piece of text into a list of numbers that captures its meaning, so a computer can…

Is your AI agent becoming unreliable? When an agent's system prompt grows to hundreds of lines and handles dozens of tasks, it suffers from…

It's a production-ready blueprint for implementing a stateful, three-layer memory architecture for AI agents. Inspired by Anthropic's managed agent memory framework, this approach uses…