AI costs are no longer dominated by model training; for most teams, continuous inference on GPUs is the real bill. Cutting that bill means changing how we buy compute and how we use models, not just hunting for cheaper hours.

Why AI inference costs exploded

GPU prices have risen across hyperscalers, with some reports noting mid‑single‑digit to double‑digit percentage hikes in 2026 as demand outpaced supply. At the same time, product teams turned “a small pilot agent” into always‑on assistants, background workflows, and user‑facing features that call LLMs dozens of times per request.

The result is a compounding effect: more calls, heavier models, and high per‑hour GPU rates. Cost analyses show that as products scale, hidden items—idle GPUs, over‑sized models, unbatched traffic—often outweigh pure hourly pricing. Fixing this starts with understanding where each euro goes in the stack.

The three main levers for AI cost reduction

Practitioners now converge on three dominant levers: choosing the right GPU pricing model, optimizing the model itself (quantization/compression), and improving runtime utilization (batching, scheduling, and mixed GPU strategies).

From a pricing perspective, analyses of GPU‑as‑a‑service offerings show that hourly on‑demand gives maximum flexibility, but the highest per‑hour rate, while reserved/long‑term rentals can drop rates by 40–70% for sustained loads. On the technical side, quantization and optimized runtimes like vLLM or TensorRT‑LLM routinely deliver 20–40% more throughput per GPU without visible quality loss, and in some cases up to 5× reduction in per‑token cost when combined.

Hourly GPU rent vs API consumption: how to choose

The economic trade‑off between renting GPUs by the hour and paying per call through an API depends mainly on predictability and scale. Hourly or reserved GPUs are usually cheaper once utilization is high and stable (for example, a model serving millions of tokens per day), because you pay for capacity rather than for each token margin.

API‑based consumption wins when workloads are spiky, low‑volume, or still evolving, because you avoid idle time, cluster engineering, and over‑provisioning. Many cost‑efficient stacks now combine the two: they pay hourly for core inference models they know they will need, and use API calls for experiments, edge cases, or access to specialised models they would not host themselves.

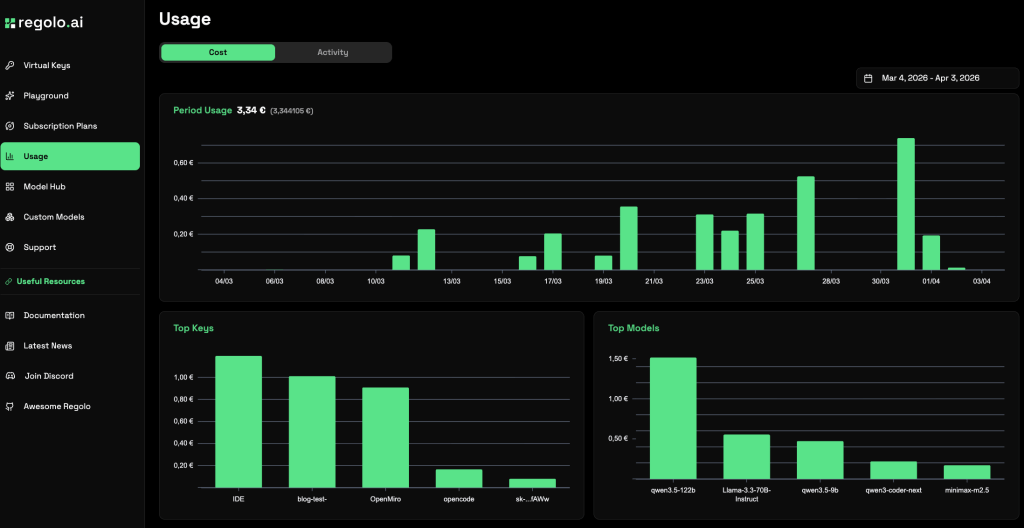

Our approaches cost control

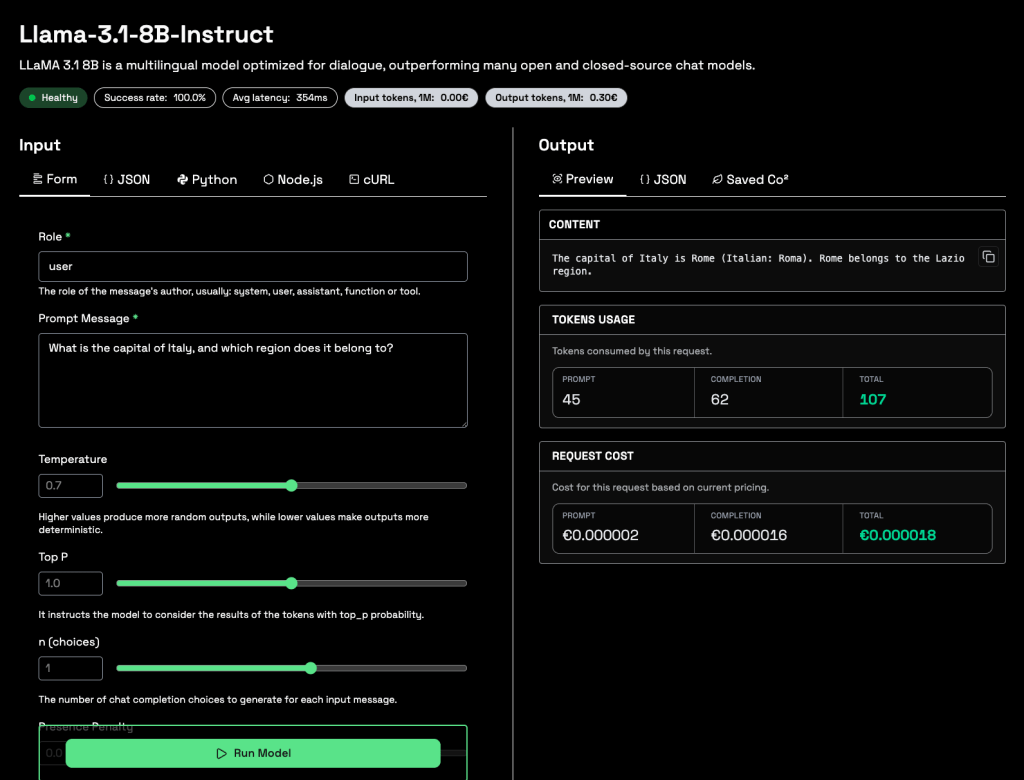

We built Regolo.ai around two simple options instead of a complex menu of ephemeral, serverless primitives: GPU hourly rent and ready‑to‑use APIs for popular models running on our Italian infrastructure, without cold starts. The idea is to let teams choose the billing style that maps to their reality, while we handle GPU orchestration, energy‑efficient data centers, and model hosting in the background.

If you know you will keep a model online for many hours, renting GPU time gives you predictable costs and the ability to pack multiple workloads onto the same cards. When you just want to hit a trending model with minimal setup, the hosted API path avoids deployment overhead but still benefits from our optimizations on quantization, batching, and hardware choice at the infrastructure layer.

Key strategies to reduce inference costs (with or without regolo.ai)

Evidence from optimization case studies points to a “full‑stack” approach: compress the model, use the right hardware, and drive utilization up.

- Right‑size the model first.

Moving from a giant frontier model to a strong 7B–14B open model, sometimes with fine‑tuning, can cut GPU memory and cost by multiples while staying within quality requirements. Approaches like FP8/INT4 quantization and sparsity consistently deliver 20–40% throughput gains and allow bigger batch sizes on the same GPU. - Exploit GPU utilization.

Modern inference engines (vLLM, Triton, TensorRT‑LLM) use continuous batching and KV‑cache tricks to keep memory bandwidth—and thus tokens per dollar—high. Metrics like Model Bandwidth Utilization (MBU) help identify when GPUs are under‑used, signalling room to pack more requests into the same hardware. - Pick the right pricing horizon.

Analyses of reserved vs on‑demand vs spot show that hybrid strategies (stable inference on reserved or long‑term rental, experiments and training on on‑demand or spot) typically cut total GPU bills by 40–60% compared to running everything on the most flexible option.

Our role is to absorb much of this complexity: we run optimized open models on cost‑efficient GPUs, in Italian data centers powered by renewable energy, and expose either a clear hourly rate or a per‑API cost depending on how you prefer to buy. That gives a simpler path to “tokens per euro” efficiency without building a full MLOps team.

Minimal Python example: cost‑aware use of our APIs

Below is a minimal example that shows how to call a Regolo.ai model in a way that supports cost control: small, targeted prompts, reuse of context, and a clear place to track token usage. Replace placeholders with values from the latest docs.

import requests

from datetime import datetime

API_KEY = "YOUR_API_KEY"

API_URL = "https://api.regolo.ai/v1/chat/completions" # Check docs

MODEL_ID = "MODEL_ID_PLACEHOLDER" # e.g. a cost-efficient open model

headers = {

"Authorization": f"Bearer {API_KEY}",

"Content-Type": "application/json",

}

def call_regolo(messages):

payload = {

"model": MODEL_ID,

"messages": messages,

# You might add temperature/top_p controls here to standardize output.

}

start = datetime.now()

resp = requests.post(API_URL, headers=headers, json=payload, timeout=30)

resp.raise_for_status()

data = resp.json()

elapsed = (datetime.now() - start).total_seconds()

content = data["choices"][0]["message"]["content"]

usage = data.get("usage", {}) # e.g. {"prompt_tokens": ..., "completion_tokens": ...}

print(f"Latency: {elapsed:.2f}s, usage: {usage}")

return content, usage

messages = [

{

"role": "system",

"content": (

"You are a concise assistant helping us reduce LLM costs. "

"Answer in under 80 words."

),

},

{

"role": "user",

"content": (

"Give 3 concrete ways to lower our AI inference bill without "

"changing model quality too much."

),

},

]

reply, usage = call_regolo(messages)

print(reply)Code language: Python (python)By tracking usage and latency, you can compare different models or prompt strategies in terms of tokens per request and time per response, then move the most cost‑effective ones to long‑running GPU rentals while keeping exploratory ones on API‑only usage.

Common mistakes in AI cost reduction

One common mistake is optimising only for per‑hour GPU price and ignoring utilization; cheap GPUs running at 20% capacity can be worse than a pricier card running near full. Another is staying on frontier‑class models for all tasks instead of segmenting: smaller open models can handle summarization, classification, and many RAG queries at a fraction of the cost.

Teams also sometimes ignore data flows: expensive LLM calls are triggered deep inside microservices without caching, prompt reuse, or batching, so a simple UX change (combining questions, delaying non‑critical calls) can save more than infrastructure tweaks. The most mature stacks treat AI costs like any other performance budget, with dashboards, alerts, and regular reviews to decide when to shift workloads between API, hourly GPUs, and possibly on‑prem.

FAQ

Is renting GPUs hourly cheaper than using only APIs?

It can be, once your traffic is steady and high enough to keep GPUs busy; for low or spiky usage, API access is often more economical.

What is the single biggest technical lever to cut inference costs?

Quantization plus an optimized runtime (for example FP8/INT4 + vLLM‑style batching) often delivers 2–5× better tokens‑per‑dollar in real deployments.

How does Regolo.ai help reduce AI costs?

We host optimized open models on efficient GPUs in Italian data centers, offering either hourly rent or API access, so you can match costs to usage without managing GPU infra yourself.

Do I need a separate training cluster to save money?

Not necessarily. Many teams now outsource heavy training or fine‑tuning and focus on making inference cheaper via smaller models, quantization, and right‑sized GPUs.

Can I reach near “local” costs while using Regolo.ai APIs?

For many workloads, yes: by picking cost‑efficient models, trimming prompts, and leveraging our optimized inference stack on EU GPUs, you can get close to the economics of well‑run self‑hosting without owning hardware

🚀 Start your free 30-day trial at regolo.ai and deploy LLMs with complete privacy by design.

👉 Talk with our Engineers or Start your 30 days free →

- Discord – Share your thoughts

- GitHub Repo – Code of blog articles ready to start

- Follow Us on X @regolo_ai

- Open discussion on our Subreddit Community

Built with ❤️ by the Regolo team. Questions? regolo.ai/contact or chat with us on Discord