When you use GitHub Copilot, your code snippets are processed through Microsoft Azure infrastructure. While GitHub states that code from Copilot Business and Enterprise is not used to train their models, the data still leaves your premises and is subject to Microsoft’s data handling policies.

For teams handling proprietary algorithms, customer data, or security-sensitive codebases, this creates compliance friction.

A GitHub Copilot alternative that addresses these concerns is a custom coding assistant built on private infrastructure, using an editor like Cursor or open-source extensions like Continue.dev and RooCode, combined with a private LLM provider like Regolo.ai.

How a Custom Coding Assistant Works

Instead of relying on a black-box service, a custom coding assistant gives you full control over the inference pipeline. Here is the architecture:

    ┌─────────────────┐     ┌───────────────┐     ┌─────────────────┐
    │   IDE Plugin    │────▶│  Local Proxy  │────▶│   Private LLM   │
    │ (Cursor/RooCode)│     │ (Cipher/MCP)  │     │ (Regolo.ai API) │
    └─────────────────┘     └───────────────┘     └─────────────────┘
             │                      │                      │
             ▼                      ▼                      ▼
       Code context         Persistent memory        European data
       stays local          (team knowledge)        centers (GDPR)

A local memory layer stores persistent context about your codebase architecture. LLM inference happens via Regolo’s API, which runs on European infrastructure with zero data retention. For teams based in Europe, this can also mean lower latency than a US-hosted service.
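As a rough illustration of the middle box in the diagram, the sketch below shows how a local proxy might merge recalled team knowledge with the code context before anything is sent to the LLM. The helper name `build_messages` and the prompt wording are assumptions made for illustration; Cipher's actual internals may differ.

```python
from typing import Dict, List


def build_messages(code_context: str, memories: List[str], question: str) -> List[Dict[str, str]]:
    """Assemble an OpenAI-style chat message list (hypothetical helper).

    code_context: the snippet the IDE plugin chose to share.
    memories: short notes recalled from the local memory layer.
    """
    system_lines = ["You are a coding assistant. Follow these team rules:"]
    system_lines += [f"- {note}" for note in memories]
    return [
        {"role": "system", "content": "\n".join(system_lines)},
        {"role": "user", "content": f"{question}\n\nRelevant code:\n{code_context}"},
    ]


messages = build_messages(
    code_context="def load_user(db, user_id): ...",
    memories=["Always use Repository Pattern for database access"],
    question="Refactor this function.",
)
```

Only the resulting `messages` list would travel to the private LLM; the memory store itself stays on the local side of the diagram.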

Setting Up Regolo with RooCode

You can configure a private coding assistant in under five minutes. First, install the Cipher memory server globally:

    npm install -g @byterover/cipher

Create a configuration file (cipher.yml) pointing to Regolo.ai’s API:

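    # LLM settings: Regolo is reached through the OpenAI-style provider
    llm:
      provider: openai
      model: Llama-3.3-70B-Instruct
      apiKey: $REGOLO_API_KEY
      baseURL: https://api.regolo.ai/v1
    # Embedding model used by the memory layer
    embedding:
      provider: openai
      model: gte-Qwen2
      apiKey: $REGOLO_API_KEY
      baseURL: https://api.regolo.ai/v1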
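Before wiring the key into Cipher, it can help to check the endpoint directly. The following is a minimal sketch that builds a chat request against the `baseURL` from cipher.yml. It assumes the API follows the OpenAI `/chat/completions` path and response shape, which the `provider: openai` setting suggests but this article does not confirm. The function names are illustrative.

```python
import json
import urllib.request

BASE_URL = "https://api.regolo.ai/v1"  # same value as baseURL in cipher.yml


def build_chat_request(prompt: str, api_key: str,
                       model: str = "Llama-3.3-70B-Instruct") -> urllib.request.Request:
    """Build (but do not send) a chat completion request.

    Assumption: the endpoint mirrors the OpenAI /chat/completions route.
    """
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first choice's text (OpenAI response shape assumed)."""
    with urllib.request.urlopen(request, timeout=30) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]
```

A smoke test would then be `send(build_chat_request("Say hello", os.environ["REGOLO_API_KEY"]))` after `import os`, run from a shell where the key is exported.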

Connect RooCode via the MCP (Model Context Protocol) server configuration in VS Code:

    {
      "mcpServers": {
        "cipher": {
          "type": "stdio",
          "command": "cipher",
          "args": ["--mode", "mcp"],
          "env": {
            "REGOLO_API_KEY": "YOUR_REGOLO_KEY_HERE"
          }
        }
      }
    }

Your coding assistant now has persistent memory, remembering architectural decisions across sessions, while keeping all code context local or within your private vector store.
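If you would rather not paste the key into a file by hand, the same JSON can be generated from an environment variable. This is a small sketch, not part of RooCode or Cipher; the function names are hypothetical and the target path is whichever MCP settings file your RooCode installation reads.

```python
import json
from pathlib import Path


def mcp_config(api_key: str) -> dict:
    """Return the same structure as the JSON above, with the key filled in."""
    return {
        "mcpServers": {
            "cipher": {
                "type": "stdio",
                "command": "cipher",
                "args": ["--mode", "mcp"],
                "env": {"REGOLO_API_KEY": api_key},
            }
        }
    }


def write_mcp_config(path: Path, api_key: str) -> None:
    """Write the config to `path`, creating parent folders if needed."""
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(mcp_config(api_key), indent=2) + "\n", encoding="utf-8")
```

Calling `write_mcp_config(target_path, os.environ["REGOLO_API_KEY"])` keeps the secret in your shell environment. Since the generated file still contains the key, it should stay out of version control.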

Code with Privacy

| Privacy Factor | GitHub Copilot | Custom + Regolo |
|---|---|---|
| Data retention | Configurable by tier | Zero retention policy |
| Training data usage | Opt-out available | Never used for training |
| Geographic jurisdiction | US (Azure) | EU Data Centers |
| GDPR compliance | Yes | Full GDPR compliance |
| On-premise option | No | No |
| Code context leaves IDE | Yes | Yes with local setup |

A custom coding assistant also offers deeper flexibility:

- Persistent memory: Cipher stores architectural decisions, coding standards, and bug fix history in a vector database that the agent can query across sessions

- Multi-model selection: Switch between Llama-3.3 for reasoning, Qwen for multilingual code, or specialized models for specific tasks

- Team-shared knowledge: Point Cipher to a shared Postgres or Qdrant instance so every developer’s agent respects the same organizational rules

- .rooignore files: Exclude sensitive files from ever being processed by the AI

This level of customization is hard to match with Copilot’s closed architecture. When your team adopts a new pattern (“always use Repository Pattern for database access”), you document it once in Cipher, and every developer’s assistant immediately knows the rule.
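The idea of documenting a rule once and recalling it later can be sketched with a toy in-memory store. This is not Cipher's implementation: the `TeamMemory` class is hypothetical, and the bag-of-words `embed` function stands in for a real embedding model such as the one configured in cipher.yml.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real setup would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class TeamMemory:
    """In-memory stand-in for a shared vector store (hypothetical class)."""

    def __init__(self) -> None:
        self._notes: List[Tuple[str, Counter]] = []

    def remember(self, note: str) -> None:
        self._notes.append((note, embed(note)))

    def recall(self, query: str, top_k: int = 1) -> List[str]:
        """Return the top_k stored notes most similar to the query."""
        query_vec = embed(query)
        ranked = sorted(self._notes, key=lambda item: cosine(query_vec, item[1]), reverse=True)
        return [note for note, _ in ranked[:top_k]]


memory = TeamMemory()
memory.remember("Always use Repository Pattern for database access")
memory.remember("Log errors with structured JSON fields")
best = memory.recall("How should I write database access code?")
```

Here `best` holds the Repository Pattern rule, because it shares the words "database" and "access" with the query. In a shared deployment, the list inside `TeamMemory` would be replaced by the Postgres or Qdrant instance mentioned above.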

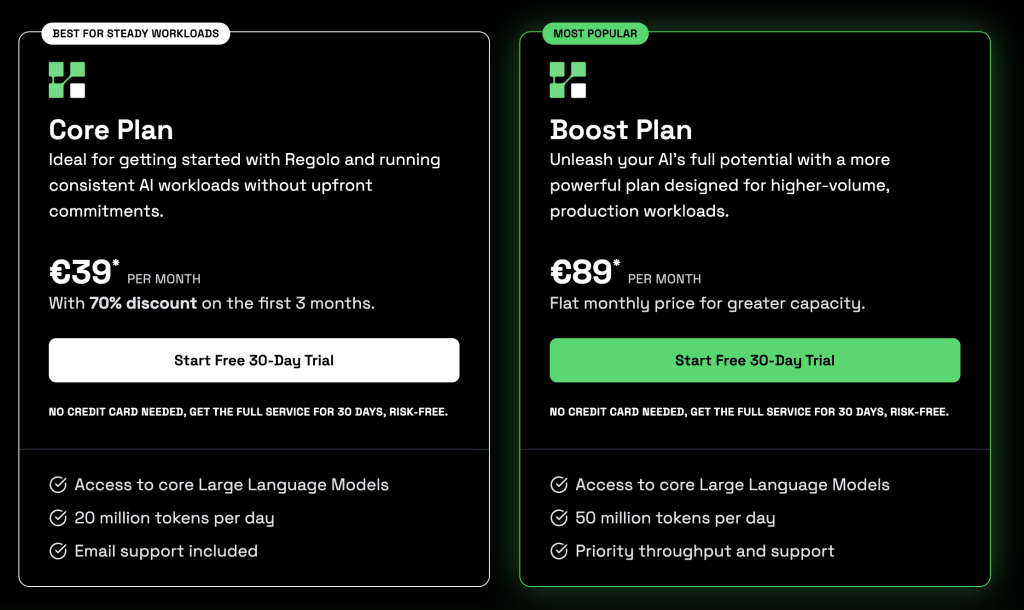

Competitive Price

Regolo offers a Core Plan with 20 million tokens per day and a Boost Plan with 50 million tokens per day, with priority throughput. For teams with moderate AI usage, this can be significantly more cost-effective—especially when combined with the ability to run smaller models locally for simple completions.

GitHub Copilot is currently priced per request rather than per token, so the comparison table below is an approximation based on the Qwen3-Coder-Next model.

In this example, the input-to-output token ratio for Qwen3-Coder-Next is between 10:1 and 20:1, meaning input context dominates costs:

Total Cost = (Input_Tokens/1M × €0.50) + (Output_Tokens/1M × €2.00)

Costs: Regolo (Qwen3‑Coder‑Next) vs Copilot Pro / Pro+

| Profile | Monthly Requests | Regolo Qwen3‑Coder‑Next (€/month) | Copilot Pro (requests modelled as all premium, $/month) | Copilot Pro+ (same assumption, $/month) |
|---|---|---|---|---|
| Light User | 1,000 | €1.30 | $38 ($10 base + 700 × $0.04 overage; 300 included) | $39 ($39 base, no overage; 1,500 included) |
| Medium User | 2,400 | €5.40 | $94 ($10 base + 2,100 × $0.04 overage; 300 included) | $75 ($39 base + 900 × $0.04 overage; 1,500 included) |
| Heavy User | 5,000 | €19.00 | $198 ($10 base + 4,700 × $0.04 overage; 300 included) | $179 ($39 base + 3,500 × $0.04 overage; 1,500 included) |
| Power User | 8,000 | €57.60 | $318 ($10 base + 7,700 × $0.04 overage; 300 included) | $299 ($39 base + 6,500 × $0.04 overage; 1,500 included) |
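The token formula and the Copilot columns can be reproduced with a short sketch. The base fees ($10 and $39) are the ones implied by the table's totals. The per-request token counts are a hypothetical choice inside the 10:1 to 20:1 range, since the article does not state the counts behind each profile.

```python
def regolo_cost_eur(input_tokens: int, output_tokens: int,
                    input_price: float = 0.50, output_price: float = 2.00) -> float:
    """Total Cost formula from the text; prices are EUR per 1M tokens."""
    return input_tokens / 1_000_000 * input_price + output_tokens / 1_000_000 * output_price


def copilot_cost_usd(requests: int, included: int, base_fee: float,
                     overage: float = 0.04) -> float:
    """Base subscription plus per-request overage beyond the included quota."""
    return base_fee + max(0, requests - included) * overage


# Hypothetical light user: 1,000 requests at about 2,000 input
# and 150 output tokens each (roughly a 13:1 ratio).
light_regolo = regolo_cost_eur(input_tokens=2_000_000, output_tokens=150_000)
light_pro = copilot_cost_usd(requests=1_000, included=300, base_fee=10)
light_pro_plus = copilot_cost_usd(requests=1_000, included=1_500, base_fee=39)
```

Under these assumptions the light profile comes to about €1.30 on Regolo, $38 on Copilot Pro, and $39 on Copilot Pro+. Changing the token counts per request shifts only the Regolo figure, which is why the context size you send matters more than the request count.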

Realistic Team Scenarios (10 Developers)

Scenario A: Balanced Team (Most Common)

Team composition: 4 Light + 4 Medium + 2 Heavy

| Metric | Regolo.ai | Copilot Individual | Copilot Business | Copilot Enterprise |
|---|---|---|---|---|
| Monthly Cost | €63.60 | $100 (~€92) | $190 (~€175) | $390 (~€359) |
| Cost per dev | €6.36 | ~€9.20 | ~€17.50 | ~€35.90 |
| Savings vs Enterprise | €295.40/month | n/a | n/a | n/a |

Scenario B: Senior-Heavy Team

Team composition: 2 Light + 3 Medium + 4 Heavy + 1 Power User

| Metric | Regolo.ai | Copilot Business | Copilot Enterprise |
|---|---|---|---|
| Monthly Cost | €147.50 | $190 (~€175) | $390 (~€359) |
| Cost per dev | €14.75 | ~€17.50 | ~€35.90 |
| Savings vs Enterprise | €211.50/month | n/a | n/a |
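The derived rows in both scenario tables follow from the monthly totals with simple arithmetic. The sketch below takes the Regolo totals as given and assumes an exchange rate of 0.92 EUR per USD, inferred from the tables' own dollar-to-euro pairs rather than stated in the article.

```python
USD_TO_EUR = 0.92  # assumption: rate implied by "$390 (~€359)" in the tables


def to_eur(usd: float) -> int:
    """Convert USD to EUR, rounded to whole euros as in the tables."""
    return round(usd * USD_TO_EUR)


def per_dev(monthly_eur: float, team_size: int = 10) -> float:
    """Monthly cost divided evenly across the team."""
    return round(monthly_eur / team_size, 2)


def savings(regolo_eur: float, other_usd: float) -> float:
    """Monthly saving of the Regolo total against a USD-priced plan."""
    return round(to_eur(other_usd) - regolo_eur, 2)


scenario_a = {"per_dev": per_dev(63.60), "savings_vs_enterprise": savings(63.60, 390)}
scenario_b = {"per_dev": per_dev(147.50), "savings_vs_enterprise": savings(147.50, 390)}
```

Swapping in your own team size, monthly total, or exchange rate gives the equivalent rows for a different team.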

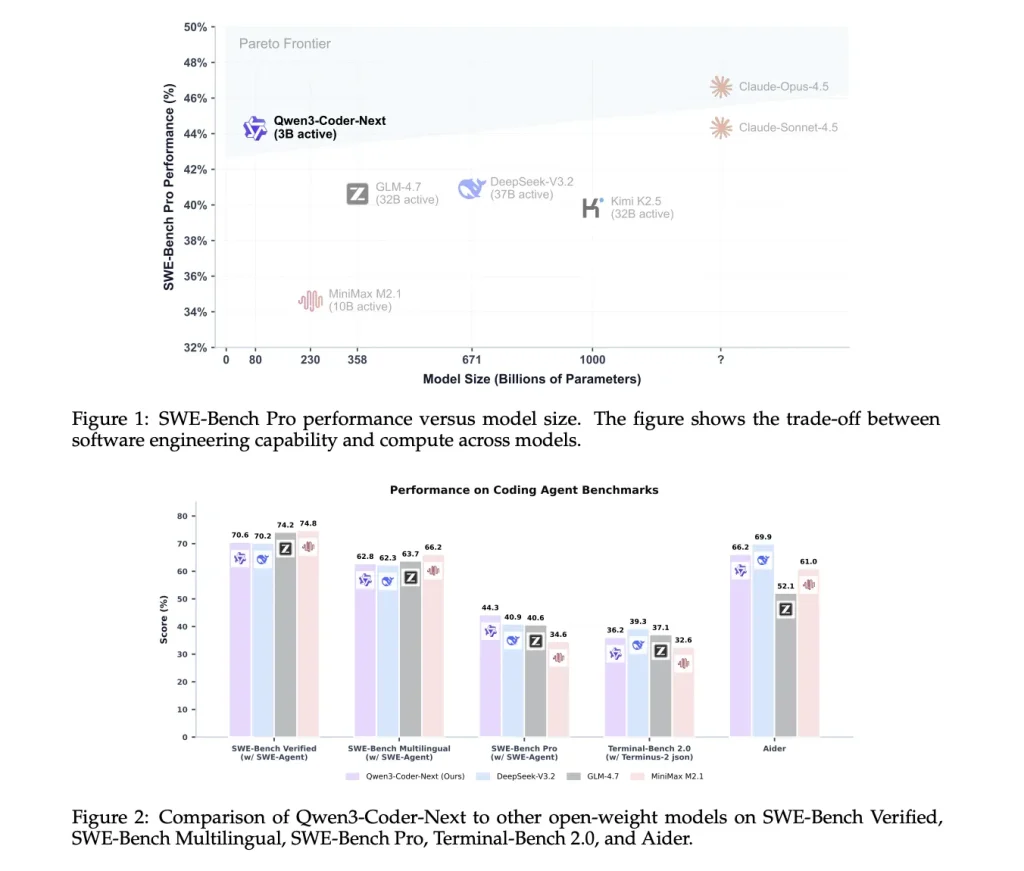

Performance in Non-English Languages

Most LLMs are trained predominantly on English data, which can produce suboptimal results for codebases with comments, variable names, or documentation in other languages.

Regolo.ai provides access to models like Llama-3.3-70B-Instruct and Qwen variants, which have strong multilingual capabilities. These models may have been trained on more diverse language distributions than the base models Copilot uses (primarily OpenAI GPT models), although the exact training mixes are not fully public.

For development teams in Europe, Asia, or Latin America, this means:

- Better code completion for variables and functions named in local languages

- Improved understanding of non-English comments and documentation

- More accurate generation of locale-specific logic (date formats, currency handling, etc.)

Verifiable benchmark data comparing Copilot and Regolo.ai specifically for non-English coding tasks is not publicly available. Teams should conduct their own evaluations with their specific language requirements.
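One way to run such an evaluation is a small pass-rate harness over prompts written in your team's language. The sketch below is deliberately crude: it checks whether an expected snippet appears in the completion, and `fake_model` is a stand-in so the example runs offline. The names and the two Italian test cases are illustrative, not a published benchmark.

```python
from typing import Callable, Dict, List


def pass_rate(complete: Callable[[str], str], cases: List[Dict[str, str]]) -> float:
    """Fraction of cases whose completion contains the expected snippet.

    complete: any function mapping a prompt to model output (hypothetical hook).
    cases: dicts with 'prompt' and 'expected' keys.
    """
    if not cases:
        return 0.0
    hits = sum(1 for case in cases if case["expected"] in complete(case["prompt"]))
    return hits / len(cases)


# Two illustrative cases with Italian identifiers and comments.
cases = [
    {
        "prompt": "# Calcola il prezzo totale con IVA\ndef prezzo_con_iva(prezzo, aliquota):",
        "expected": "prezzo * (1 + aliquota)",
    },
    {
        "prompt": "# Formatta la data come giorno/mese/anno\ndef formatta_data(data):",
        "expected": "%d/%m/%Y",
    },
]


def fake_model(prompt: str) -> str:
    """Stand-in for a real API call so the sketch runs offline."""
    if "prezzo_con_iva" in prompt:
        return "    return prezzo * (1 + aliquota)"
    return "    return str(data)"


score = pass_rate(fake_model, cases)
```

Replacing `fake_model` with a function that calls each assistant's API lets you compare them on the same cases. For real use, executing the generated code against unit tests is a more reliable signal than substring matching.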

Built with ❤️ by the Regolo team. Questions? support@regolo.ai or chat with us on Discord