Real-time AI applications live and die by latency. When a user sends a message to your chatbot or speaks to your voice assistant, they expect a response in milliseconds — not seconds.

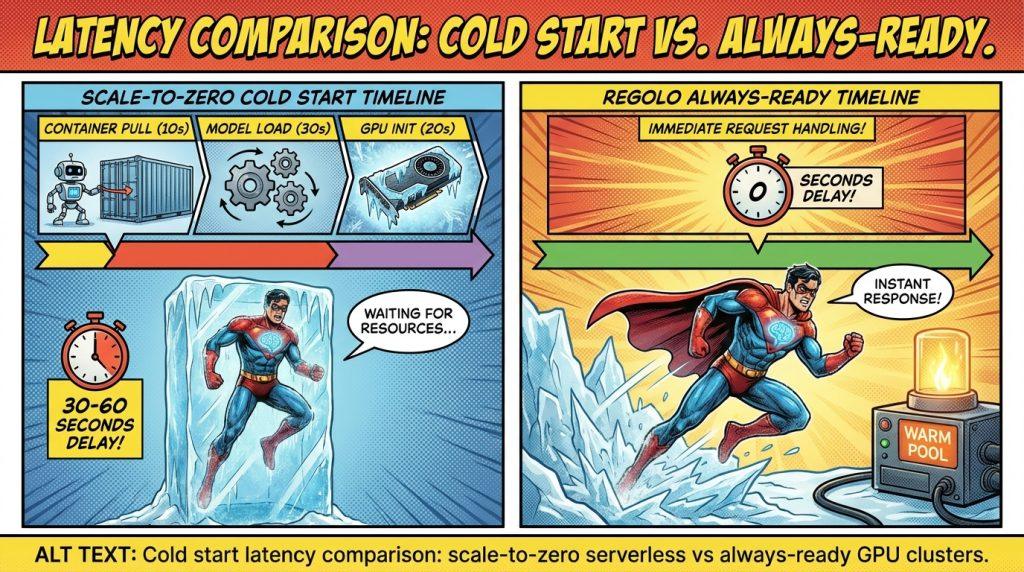

But here is the brutal reality developers are discovering: “serverless” GPU deployment, despite its promise of cost-efficiency, often introduces catastrophic cold start delays of 30 to 60 seconds when scaling from zero. This single technical limitation renders the approach unusable for interactive AI applications.

The problem is not theoretical. Production environments running serverless LLM inference report cold start times exceeding 40 seconds just to produce the first token, while subsequent inference takes only ~30ms per token. This gap of more than 1000x between cold and warm states creates an unacceptable user experience for chatbots, voice AI, and any real-time generative application.

If you are tired of choosing between cost optimization and user experience, Regolo offers high-performance NVIDIA H100/A100/L40S GPU clusters with low-latency inference—no cold start surprises.

The Scale-to-Zero Promise (and the Latency Trap)

Scale-to-zero architecture sounds like a dream for AI infrastructure. When no requests are coming in, your GPU resources scale down to zero—eliminating idle costs. When traffic returns, the system automatically provisions a new container, loads your model, and starts serving inference.

For batch processing, background jobs, or internal tools with sporadic usage, this model works well.

But for real-time applications—customer-facing chatbots, voice AI agents, live copilots, or interactive coding assistants—scale-to-zero becomes a latency nightmare.

The core issue: spinning up a GPU container, initializing the ML framework, loading multi-gigabyte model weights into GPU memory, and warming up CUDA graphs can take 30 to 60 seconds. In a world where users abandon applications after 3 seconds of waiting, this is a death sentence for user engagement.

The Anatomy of a Cold Start

Research on production serverless LLM platforms reveals exactly where those 30-60 seconds disappear. A cold start involves several sequential stages, each contributing to the delay:

1. Container creation and image pulling (10-30 seconds)

Serverless platforms must pull your container image from a registry. For LLM workloads, these images are massive—often 8+ GB—due to dependencies, CUDA libraries, and runtime environments. Network bandwidth and registry contention make this stage the dominant source of latency.

2. Model weight loading (2-10 seconds)

Once the container runs, the model weights must transfer from storage to GPU memory. For a 7B parameter model at FP16 precision, this means moving ~14GB of data. Even with fast local storage, this takes seconds.
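
The ~14GB figure and the seconds-scale load time follow from simple arithmetic. The sketch below works through it; the two bandwidth values are hypothetical round numbers chosen for illustration, not measurements, and the estimate ignores deserialization and host-to-device copy overhead.

```python
def weight_bytes(num_params: float, bytes_per_param: int) -> float:
    """Size of the raw model weights in bytes."""
    return num_params * bytes_per_param


def load_seconds(size_bytes: float, bandwidth_gb_per_s: float) -> float:
    """Idealized transfer time at a sustained bandwidth given in GB/s."""
    return size_bytes / (bandwidth_gb_per_s * 1e9)


size = weight_bytes(7e9, 2)  # 7B parameters at FP16 (2 bytes each)
print(f"weights: {size / 1e9:.0f} GB")

# Hypothetical sustained read bandwidths, for illustration only.
for label, bandwidth in [("network volume", 1.4), ("local NVMe", 7.0)]:
    print(f"{label}: {load_seconds(size, bandwidth):.1f} s")
```

With these assumed bandwidths the estimate spans roughly 2 to 10 seconds, which is the range quoted for this stage.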

3. GPU initialization and CUDA context setup (5-15 seconds)

Initializing the GPU runtime, creating CUDA contexts, and setting up the execution environment adds significant overhead. This includes allocating GPU memory pools and initializing compute kernels.

4. Framework and runtime startup (3-10 seconds)

Loading PyTorch, TensorRT, or vLLM, initializing the inference engine, and warming up the model execution graph takes additional time.

5. KV cache and CUDA graph initialization (variable)

For optimized inference, systems pre-allocate KV cache memory and compile CUDA graphs. While recent techniques like state materialization can reduce this, it remains a contributor to cold start times.
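
To give a sense of how much memory KV cache pre-allocation involves, here is a rough sizing sketch. The model shape (32 layers, 32 KV heads, head dimension 128, FP16) is an assumption resembling a typical 7B decoder, and the context length and concurrency are hypothetical; substitute your own model's values.

```python
def kv_cache_bytes_per_token(layers: int, kv_heads: int, head_dim: int,
                             bytes_per_value: int) -> int:
    # Factor of 2: each layer stores one key and one value vector per head.
    return 2 * layers * kv_heads * head_dim * bytes_per_value


# Assumed model shape (illustrative, not taken from a specific deployment).
per_token = kv_cache_bytes_per_token(layers=32, kv_heads=32, head_dim=128,
                                     bytes_per_value=2)

context_tokens = 4096       # hypothetical maximum sequence length
concurrent_sequences = 8    # hypothetical batch of active conversations
total = per_token * context_tokens * concurrent_sequences

print(f"{per_token / 2**20:.2f} MiB per token")
print(f"{total / 2**30:.0f} GiB pre-allocated")
```

Under these assumptions the cache costs 0.5 MiB per token and 16 GiB in total, which is why allocating it is not free at startup.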

The result: in production environments, a cold-start instance produces the first token after more than 40 seconds, while warm instances generate tokens in ~30ms. This gap makes scale-to-zero unusable for latency-sensitive applications.
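
Adding up the stage ranges listed above gives a quick sanity check on the headline numbers. This is only arithmetic on the figures already quoted; the variable KV cache and CUDA graph stage is left out because no range is given for it.

```python
# Stage ranges in seconds, copied from the breakdown above.
STAGES = {
    "container creation and image pull": (10, 30),
    "model weight loading": (2, 10),
    "GPU initialization and CUDA context": (5, 15),
    "framework and runtime startup": (3, 10),
}

best_case = sum(low for low, _ in STAGES.values())
worst_case = sum(high for _, high in STAGES.values())
print(f"cold start budget: {best_case}-{worst_case} s")

cold_first_token_s = 40.0   # reported cold time to first token
warm_per_token_s = 0.030    # reported warm per-token latency
ratio = cold_first_token_s / warm_per_token_s
print(f"cold vs. warm: about {ratio:.0f}x")
```

The stages sum to 20-65 seconds, and a 40-second cold start is about 1333 times slower than a 30ms warm token.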

Solutions to the Cold Start Issue

The industry is developing several approaches to bridge the latency gap. Here are the most promising strategies:

1. Multi-tier checkpoint loading and local caching

Advanced systems like ServerlessLLM implement multi-tier checkpoint loading that leverages underutilized GPU memory and local storage to reduce startup times by 6–8x. By keeping model weights cached locally on GPU nodes and loading only the necessary layers on demand, a 40-second cold start can drop to roughly 5 to 7 seconds.
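
The idea of tiered lookup can be illustrated with a toy cache. This is a minimal sketch, not ServerlessLLM's actual API: the class name, tier names, and in-memory dictionaries are all hypothetical stand-ins for GPU memory, host RAM, and local SSD.

```python
from typing import Dict, Optional, Tuple


class TieredCheckpointCache:
    """Returns a checkpoint from the fastest tier that holds it."""

    TIERS = ("gpu", "ram", "ssd")  # ordered fastest to slowest

    def __init__(self) -> None:
        self._tiers: Dict[str, Dict[str, bytes]] = {t: {} for t in self.TIERS}

    def put(self, tier: str, model_id: str, blob: bytes) -> None:
        self._tiers[tier][model_id] = blob

    def get(self, model_id: str) -> Tuple[Optional[bytes], Optional[str]]:
        for index, tier in enumerate(self.TIERS):
            blob = self._tiers[tier].get(model_id)
            if blob is not None:
                # Promote the checkpoint into every faster tier so the
                # next lookup is served from the top of the hierarchy.
                for faster in self.TIERS[:index]:
                    self._tiers[faster][model_id] = blob
                return blob, tier
        return None, None  # miss: the caller must fetch from remote storage


cache = TieredCheckpointCache()
cache.put("ssd", "llm-7b", b"weights")
first_hit = cache.get("llm-7b")   # served from the SSD tier, then promoted
second_hit = cache.get("llm-7b")  # now served from the GPU tier
```

A real system also needs eviction and capacity limits per tier, which this sketch omits.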

2. Pre-initialized containers and warm pools

Some platforms maintain a pool of pre-initialized containers with models already loaded. When a request arrives, the system assigns a warm container rather than creating a new one. This approach sacrifices some cost efficiency for predictable latency—often the right trade for real-time applications.
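
A warm pool can be sketched in a few lines. The `make_worker` factory below is hypothetical: in practice it would start a container and load the model, which is exactly the slow path the pool tries to keep off the request path.

```python
import queue
from typing import Any, Callable, Tuple


class WarmPool:
    """Hands out pre-initialized workers; falls back to a cold start."""

    def __init__(self, size: int, make_worker: Callable[[], Any]) -> None:
        self._make_worker = make_worker
        self._idle: "queue.Queue[Any]" = queue.Queue()
        for _ in range(size):
            self._idle.put(make_worker())  # pay the startup cost up front

    def acquire(self) -> Tuple[Any, bool]:
        """Returns (worker, was_warm)."""
        try:
            return self._idle.get_nowait(), True
        except queue.Empty:
            # Pool exhausted: this request pays the full cold start.
            return self._make_worker(), False

    def release(self, worker: Any) -> None:
        self._idle.put(worker)


pool = WarmPool(size=1, make_worker=lambda: object())
worker_a, a_was_warm = pool.acquire()   # served from the pool
worker_b, b_was_warm = pool.acquire()   # pool empty, cold path
pool.release(worker_a)
worker_c, c_was_warm = pool.acquire()   # warm again after release
```

The pool size is the cost knob: every idle worker is a GPU you pay for, so it should track expected concurrency rather than peak load.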

3. Container image optimization

Reducing container image size through multi-stage builds, removing unnecessary dependencies, and using lightweight base images can cut pull times significantly. Every gigabyte removed from the image saves seconds during startup.
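
The "seconds per gigabyte" claim is easy to estimate. The sketch below assumes a hypothetical effective registry throughput of 4 Gbit/s and ignores layer decompression and extraction, which add further time in practice.

```python
def pull_seconds(image_gb: float, bandwidth_gbit_per_s: float) -> float:
    """Idealized image pull time: gigabytes to gigabits, divided by throughput."""
    return image_gb * 8 / bandwidth_gbit_per_s


ASSUMED_BANDWIDTH = 4.0  # Gbit/s, illustrative only

before = pull_seconds(8.0, ASSUMED_BANDWIDTH)  # an 8 GB image
after = pull_seconds(3.0, ASSUMED_BANDWIDTH)   # the same image trimmed to 3 GB
print(f"before: {before:.0f} s, after: {after:.0f} s, saved: {before - after:.0f} s")
```

At this assumed throughput each gigabyte removed saves about 2 seconds of pull time.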

4. GPU memory sharing and live migration

Techniques like GPU memory sharing and live inference migration allow workloads to move between GPUs without full cold starts. This enables better resource utilization while maintaining responsiveness.

5. Intelligent autoscaling with predictive warming

Advanced autoscaling systems can predict traffic patterns and pre-warm containers before demand spikes. By analyzing historical request patterns, the system scales from zero to ready before the first user request arrives.
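
As a rough illustration of predictive warming, the sketch below averages historical traffic per hour of day and sizes the warm capacity for the coming hour. The function names, the per-replica throughput, and the 20% headroom are all assumptions; production predictors are considerably more sophisticated.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def hourly_profile(history: Iterable[Tuple[int, float]]) -> Dict[int, float]:
    """Averages (hour_of_day, requests_per_second) samples per hour."""
    buckets = defaultdict(list)
    for hour, rps in history:
        buckets[hour].append(rps)
    return {hour: sum(values) / len(values) for hour, values in buckets.items()}


def replicas_to_prewarm(profile: Dict[int, float], next_hour: int,
                        rps_per_replica: float, headroom: float = 1.2) -> int:
    """Replicas to have ready before next_hour begins."""
    expected_rps = profile.get(next_hour, 0.0)  # no history: assume no traffic
    return math.ceil(expected_rps * headroom / rps_per_replica)


# Hypothetical history: busy at 09:00, idle at 03:00.
profile = hourly_profile([(9, 10.0), (9, 14.0), (3, 0.0)])
morning = replicas_to_prewarm(profile, next_hour=9, rps_per_replica=5.0)
night = replicas_to_prewarm(profile, next_hour=3, rps_per_replica=5.0)
```

The scheduler would call `replicas_to_prewarm` at least one cold-start duration ahead of the hour boundary so the replicas are ready when traffic arrives.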

Our Approach: Always-Ready, Low-Latency GPU Inference

Tired of choosing between cost optimization and user experience? We eliminate the cold start dilemma entirely by offering dedicated, always-ready GPU infrastructure designed for production AI workloads.

Here is how we solve the scale-to-zero crisis:

- No Cold Starts: our NVIDIA H100/A100/L40S GPU clusters are always ready to serve inference. No container spin-up delays, no model loading waits—just consistent sub-second response times for your real-time applications.

- Predictable Latency: with low-latency inference optimized for European data centers, we deliver the responsiveness chatbots and voice AI require, without the 30-60 second surprises of scale-to-zero platforms.

- Pay-As-You-Go Without Compromise: unlike traditional always-on GPU rentals that charge for idle time, we offer flexible scaling that maintains warm capacity for your traffic patterns, balancing cost efficiency with latency guarantees.

- EU Data Sovereignty: your inference runs on European infrastructure with GDPR compliance and zero data retention—no cross-border latency penalties or compliance risks.

- Container Caching Ready: for teams needing custom environments, we support optimized container deployments with fast boot sequences and local caching, ensuring your specific models and dependencies load instantly.

Comparison: Scale-to-Zero vs. Regolo

When to Use Serverless

- Batch inference jobs (document processing, content generation)

- Internal tools with sporadic usage (weekly reports, monthly analysis)

- Development and testing environments

- Non-interactive background tasks

When to Avoid Serverless

- Customer-facing chatbots and virtual assistants

- Real-time voice AI and transcription

- Interactive coding copilots

- Live gaming and interactive entertainment

- Any application where first-token latency impacts user retention

If your application appears in the “avoid” list, you need always-ready infrastructure—or at minimum, a platform with sophisticated container caching and predictive warming that keeps p95 latency under 1 second.
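
To check a platform against a p95 target, you can compute the percentile from measured first-token latencies. The sketch below uses the nearest-rank method on made-up samples; it shows that once more than 5% of requests hit a cold start, the p95 is dominated by the cold start itself.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty list of latencies."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Hypothetical first-token latencies in seconds.
mostly_warm = [0.2] * 19 + [40.0]        # 1 cold start in 20 requests
too_many_cold = [0.2] * 18 + [40.0] * 2  # 2 cold starts in 20 requests

p95_ok = percentile(mostly_warm, 95)
p95_bad = percentile(too_many_cold, 95)
print(f"p95 with 5% cold starts:  {p95_ok} s")
print(f"p95 with 10% cold starts: {p95_bad} s")
```

Note that a passing p95 still hides the unlucky users in the tail, so it is worth tracking p99 or the cold-start rate alongside it.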

Best Practices for Minimizing Cold Starts

If you must use scale-to-zero infrastructure, here are techniques to mitigate cold start pain:

1. Optimize container images

- Use multi-stage Docker builds to minimize image size

- Remove unnecessary dependencies and development tools

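- Choose lightweight base images (Distroless or a slim glibc-based runtime image; Alpine uses musl libc, which is often incompatible with prebuilt CUDA and PyTorch binaries)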

- Pre-download models into the image rather than pulling at runtime

2. Implement aggressive caching

- Cache model weights on local SSDs or GPU memory

- Use tiered storage (NVMe → RAM → GPU VRAM) for fastest access

- Implement request coalescing to avoid redundant model loads
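
Request coalescing means that concurrent requests for the same model share a single in-flight load instead of each triggering their own. Here is a minimal asyncio sketch; `CoalescingLoader` and `fake_load` are hypothetical names, and the sleep stands in for a real weight load.

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict


class CoalescingLoader:
    """Ensures at most one load per model is in flight at a time."""

    def __init__(self, load_fn: Callable[[str], Awaitable[Any]]) -> None:
        self._load_fn = load_fn
        self._inflight: Dict[str, asyncio.Task] = {}
        self._loaded: Dict[str, Any] = {}

    async def get(self, model_id: str) -> Any:
        if model_id in self._loaded:
            return self._loaded[model_id]
        task = self._inflight.get(model_id)
        if task is None:
            # First caller starts the load; later callers await the same task.
            task = asyncio.create_task(self._load_fn(model_id))
            self._inflight[model_id] = task
        try:
            model = await task
        finally:
            self._inflight.pop(model_id, None)
        self._loaded[model_id] = model
        return model


async def demo():
    calls = 0

    async def fake_load(model_id: str) -> str:
        nonlocal calls
        calls += 1
        await asyncio.sleep(0.01)  # stand-in for a multi-second weight load
        return f"weights:{model_id}"

    loader = CoalescingLoader(fake_load)
    results = await asyncio.gather(*(loader.get("llm-7b") for _ in range(5)))
    return calls, results


load_calls, load_results = asyncio.run(demo())
```

Five concurrent requests result in a single call to the loader, and all five receive the same weights.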

3. Use minimum replica counts

Configure your deployment to maintain at least one warm replica—even if it costs more. The improved user experience typically justifies the expense for production workloads.
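
In autoscaler terms, a minimum replica count is just a floor on the scaling decision. The sketch below shows the clamp; the function name, parameters, and default limits are hypothetical and do not correspond to any specific platform's configuration.

```python
import math


def desired_replicas(current_rps: float, rps_per_replica: float,
                     min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Replica count for the current load, clamped to [min, max]."""
    needed = math.ceil(current_rps / rps_per_replica)
    return max(min_replicas, min(needed, max_replicas))


idle_with_floor = desired_replicas(0.0, rps_per_replica=5.0)             # stays warm
idle_scale_to_zero = desired_replicas(0.0, 5.0, min_replicas=0)          # next request is cold
under_load = desired_replicas(12.0, rps_per_replica=5.0)
```

Setting `min_replicas` to 1 instead of 0 is the entire difference between a warm first request and a cold one.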

4. Consider CPU fallback for initial requests

Some systems implement a hybrid approach: serve the first request from CPU while the GPU warms up, then migrate to GPU for subsequent tokens. This is complex but can mask cold start delays.
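
The routing half of this idea can be sketched simply. The version below switches backends per request once the GPU reports ready; migrating a generation that is already in progress, as described above, is considerably harder and is not shown. The class and backend callables are hypothetical.

```python
import threading
from typing import Callable


class HybridRouter:
    """Routes to the CPU backend until the GPU backend reports ready."""

    def __init__(self, cpu_backend: Callable[[str], str],
                 gpu_backend: Callable[[str], str]) -> None:
        self._cpu = cpu_backend
        self._gpu = gpu_backend
        self._gpu_ready = threading.Event()

    def mark_gpu_ready(self) -> None:
        # Called by the warm-up routine once weights are loaded on the GPU.
        self._gpu_ready.set()

    def generate(self, prompt: str) -> str:
        backend = self._gpu if self._gpu_ready.is_set() else self._cpu
        return backend(prompt)


# Stand-in backends that only tag which path served the request.
router = HybridRouter(lambda p: f"cpu:{p}", lambda p: f"gpu:{p}")
first = router.generate("hello")   # GPU still warming up
router.mark_gpu_ready()
second = router.generate("hello")  # GPU path from now on
```

CPU inference for a multi-billion-parameter model is slow, so this only masks the cold start if the first response is short or a smaller model handles the fallback.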

However, these workarounds add operational complexity. For most teams, the simpler solution is choosing infrastructure designed for low-latency inference from the ground up.

Frequently Asked Questions

What is cold start latency in serverless GPU?

Cold start latency is the delay between receiving an inference request and producing the first response when no GPU resources are currently allocated. It includes container creation, model loading, and GPU initialization—often totaling 30-60 seconds.

Why does scale-to-zero cause cold starts?

When traffic drops to zero, serverless platforms release GPU resources to save costs. The next request must re-initialize everything—container, model weights, CUDA context—causing significant delays.

How long do cold starts typically last for LLM inference?

Production measurements show cold starts exceeding 40 seconds for LLM serving, compared to ~30ms per token for warm instances.

Are there serverless GPU platforms without cold starts?

Some platforms advertise “pre-warmed” instances or maintain warm pools to reduce cold starts, but true zero-latency requires either always-on resources or sophisticated predictive scaling.

What applications are most affected by cold start latency?

Real-time applications like chatbots, voice AI, live coding assistants, and interactive gaming are severely impacted. Users expect sub-second responses; 30-60 second delays feel like service failures.

How can I reduce cold start times?

Optimize container images, implement local model caching, maintain minimum replica counts, and use predictive scaling. However, the most reliable solution is always-ready infrastructure.

Is container caching effective for reducing cold starts?

Yes. Multi-tier caching (local SSD, RAM, GPU memory) can reduce cold start times by 6-8x, bringing 40-second delays down to 5-10 seconds. However, sub-second response times still require pre-warmed resources.

Does Regolo have cold start latency?

No. Regolo’s GPU clusters are always ready to serve inference with consistent sub-second latency — no scale-to-zero delays or container initialization waits.

The scale-to-zero cold start crisis represents a fundamental tension in AI infrastructure: cost optimization versus user experience. While serverless GPU promises efficiency, the 30-60 second cold start delays it introduces make it unsuitable for real-time AI applications—chatbots, voice AI, and interactive assistants that demand immediate responses.

Eliminate cold start latency from your AI roadmap. Start your 30-day free trial now: https://regolo.ai/pricing or join our Discord channel to ask our team how to deploy your application in our data centers.

🚀 Ready? Start your free trial today

- Discord – Share your thoughts

- GitHub Repo – Code of blog articles ready to start

- Follow Us on X @regolo_ai

- Open discussion on our Subreddit Community

Built with ❤️ by the Regolo team. Questions? regolo.ai/contact or chat with us on Discord