AI is becoming part of everyday products, but every prompt, completion, and agent workflow has a real infrastructure cost.

The public debate has shifted from training alone to the ongoing environmental impact of inference. Data center electricity demand is projected to more than double to about 945 TWh by 2030, with AI as a major driver.

That shift matters for builders. A single request may look negligible, yet inference runs at enormous scale, and that is exactly why energy use, water consumption, and carbon emissions are now central topics in the AI conversation.

This is where measurement becomes practical, not philosophical. Eugenio Petullà, from the Regolo team, created ai-footprint, an NPM module designed to help developers estimate the environmental impact of their AI inference workloads, turning sustainability from a vague concern into something you can track and improve.

If you are building LLM features today, the goal is not to stop using AI. The goal is to measure what your application consumes, optimize what you can control, and choose infrastructure that aligns performance with lower environmental impact, including providers like Regolo that deliver inference powered by 100% green energy.

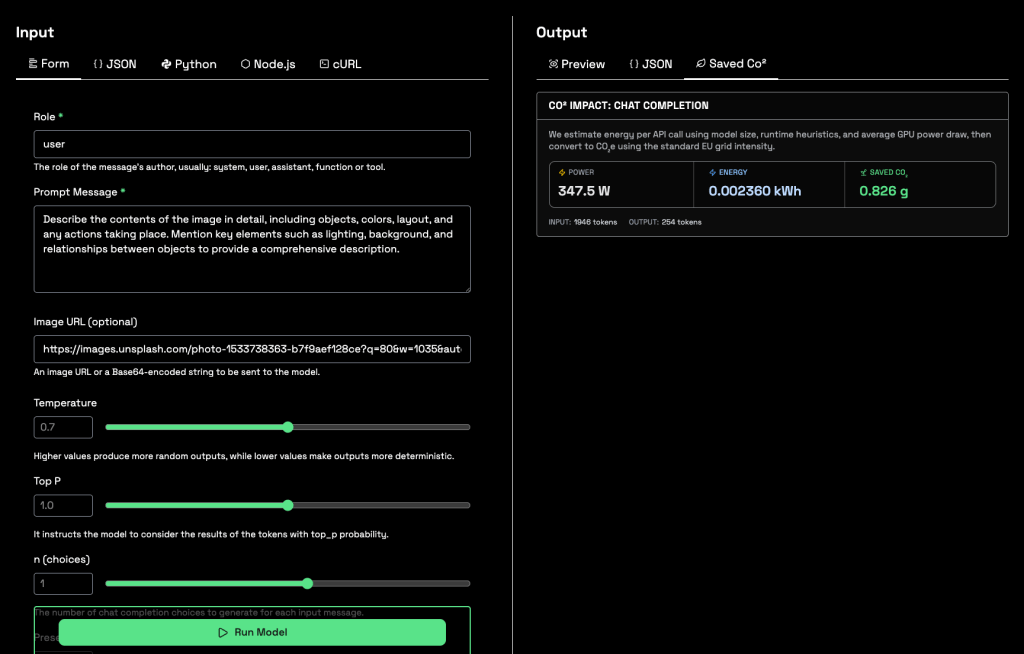

Below is an implementation of this module in the Playground, showing the carbon footprint saved with each inference 👇

Why AI footprint matters now

The urgency is no longer theoretical. The International Energy Agency’s 2025 reporting says global data-center electricity use is set to more than double by 2030, reaching roughly 945 TWh, and AI-optimized data centers are expected to be a key source of that growth.

In advanced economies, the same IEA analysis says data centers are on track to account for more than 20% of total electricity demand growth through 2030, and in the United States the share is close to half. Global data-center demand was estimated at around 415 TWh in 2024.

That is why the conversation on X has become more intense around LLM inference, not just model training. The common public framing is simple: per-query impact may be small, but scale changes everything.

For companies shipping AI products, this creates three business realities:

- Customers increasingly ask for transparency around emissions and infrastructure choices.

- Sustainability teams need metrics, not marketing claims.

- Product and engineering teams need a way to compare architectural decisions in operational terms.

Without measurement, these trade-offs stay invisible. With measurement, they become manageable.

What “AI footprint” actually includes

When people talk about AI footprint, they often mix different layers together. In practice, it helps to separate four components.

1. Electricity use

Electricity is the most discussed dimension because inference runs continuously and directly affects grid demand. The IEA projects data-center electricity demand at about 945 TWh by 2030, more than double today’s level.

2. Carbon emissions

Carbon depends on how that electricity is produced. The same workload can have very different emissions depending on the local grid mix, the efficiency of the hardware, and when the workload runs.
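The relationship can be sketched in a few lines of TypeScript: emissions are the energy consumed multiplied by the carbon intensity of the electricity behind it. The function name, the `GridProfile` type, and the intensity values below are illustrative assumptions, not measured figures for any real grid or provider.

```typescript
// Illustrative only: the intensity values are placeholder assumptions,
// not measurements for any real grid or provider.
type GridProfile = { name: string; gramsCo2PerKwh: number };

// Emissions (g CO2e) = energy (kWh) x grid carbon intensity (g CO2e per kWh).
function estimateEmissionsGrams(energyKwh: number, grid: GridProfile): number {
  if (energyKwh < 0 || grid.gramsCo2PerKwh < 0) {
    throw new RangeError("energy and carbon intensity must be non-negative");
  }
  return energyKwh * grid.gramsCo2PerKwh;
}

const fossilHeavyGrid: GridProfile = { name: "fossil-heavy (assumed)", gramsCo2PerKwh: 600 };
const lowCarbonGrid: GridProfile = { name: "low-carbon (assumed)", gramsCo2PerKwh: 50 };

// The same 2 kWh workload on two hypothetical grids.
const workloadKwh = 2;
const highEstimate = estimateEmissionsGrams(workloadKwh, fossilHeavyGrid); // 1200 g
const lowEstimate = estimateEmissionsGrams(workloadKwh, lowCarbonGrid); // 100 g
```

With these assumed numbers, the identical workload differs by a factor of twelve purely because of where the electricity comes from, which is why the grid mix deserves its own line in any footprint estimate.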

3. Water consumption

Water is increasingly part of the discussion because data centers use it directly for cooling and indirectly through power generation. Early water-footprint research from the University of California, Riverside estimated that training GPT-3 in Microsoft’s U.S. data centers consumed about 700,000 liters of freshwater on site, and put the total water footprint at 5.4 million liters once off-site water, such as that used to generate the electricity, is included.
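The gap between those two figures comes from what is counted. Here is a minimal sketch, assuming the common split into on-site water (cooling) and off-site water (electricity generation). The interface, the function name, and every numeric value below are hypothetical placeholders, not figures from the research cited above.

```typescript
// Hypothetical inputs: every value used below is a placeholder assumption.
interface WaterInputs {
  itEnergyKwh: number; // energy drawn by the servers
  pue: number; // power usage effectiveness (facility energy / IT energy)
  onsiteLitersPerKwh: number; // cooling water consumed per kWh of IT energy
  offsiteLitersPerKwh: number; // water consumed per kWh of electricity generated
}

function estimateWaterLiters(w: WaterInputs): { onsite: number; offsite: number; total: number } {
  const onsite = w.itEnergyKwh * w.onsiteLitersPerKwh;
  // Off-site water scales with total facility energy, hence the PUE factor.
  const offsite = w.itEnergyKwh * w.pue * w.offsiteLitersPerKwh;
  return { onsite, offsite, total: onsite + offsite };
}

const waterEstimate = estimateWaterLiters({
  itEnergyKwh: 100,
  pue: 1.25,
  onsiteLitersPerKwh: 0.5,
  offsiteLitersPerKwh: 2,
});
// waterEstimate.onsite is 50, waterEstimate.offsite is 250, waterEstimate.total is 300
```

Keeping the on-site and off-site terms separate makes it clear which part of the estimate depends on the cooling design and which part depends on the electricity supply.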

4. Infrastructure efficiency

Not all compute is equal. Hardware generation, rack design, cooling systems, model architecture, precision format, and utilization rates can dramatically change the footprint of the same user-facing task. NVIDIA says its Blackwell platform can deliver up to 25x better energy efficiency for certain inference workloads, while also improving water efficiency in liquid-cooled systems.

This is why raw model quality is not the only KPI that matters anymore. Responsible AI operations also depend on how efficiently that quality is delivered.

Why measurement tools matter

You cannot reduce what you do not measure. That is the real value of an NPM module like ai-footprint: it gives developers a way to connect AI usage to environmental cost, instead of treating sustainability as an abstract CSR topic.

A good AI footprint workflow helps teams answer practical questions such as:

- How much impact does one completion create?

- How does a larger model compare with a quantized or distilled alternative?

- What happens when we increase context length or output tokens?

- Is our RAG pipeline causing unnecessary inference overhead?

- How much could we save by moving to more efficient infrastructure?

For engineering teams, this becomes a feedback loop. Once environmental metrics are visible, they can be optimized like latency, uptime, or cost.
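As a rough sketch of such a feedback loop, the TypeScript below estimates energy per completion from token counts, scales it to daily traffic, and compares two model options. It does not use the ai-footprint API. The `ModelProfile` type, the function names, and the per-token energy coefficients are hypothetical placeholders chosen for illustration.

```typescript
// Hypothetical sketch: this is NOT the ai-footprint API, and the energy
// coefficients are made-up placeholders, not benchmark results.
interface ModelProfile {
  name: string;
  whPer1kInputTokens: number; // assumed energy per 1,000 prompt tokens (Wh)
  whPer1kOutputTokens: number; // assumed energy per 1,000 generated tokens (Wh)
}

interface CompletionUsage {
  inputTokens: number;
  outputTokens: number;
}

// Energy for one completion, in watt-hours.
function estimateCompletionWh(model: ModelProfile, usage: CompletionUsage): number {
  return (
    (usage.inputTokens / 1000) * model.whPer1kInputTokens +
    (usage.outputTokens / 1000) * model.whPer1kOutputTokens
  );
}

// Energy for a day of traffic, in kilowatt-hours, assuming every request
// looks like the typical one.
function estimateDailyKwh(model: ModelProfile, typical: CompletionUsage, requestsPerDay: number): number {
  return (estimateCompletionWh(model, typical) * requestsPerDay) / 1000;
}

const largeModel: ModelProfile = { name: "large (assumed)", whPer1kInputTokens: 2, whPer1kOutputTokens: 4 };
const smallModel: ModelProfile = { name: "quantized (assumed)", whPer1kInputTokens: 0.5, whPer1kOutputTokens: 1 };

const typicalUsage: CompletionUsage = { inputTokens: 1000, outputTokens: 500 };
const requestsPerDay = 100000;

const largeDailyKwh = estimateDailyKwh(largeModel, typicalUsage, requestsPerDay); // 400
const smallDailyKwh = estimateDailyKwh(smallModel, typicalUsage, requestsPerDay); // 100
const savedFraction = 1 - smallDailyKwh / largeDailyKwh; // 0.75
```

Under these assumptions a single completion costs only a few watt-hours, yet at 100,000 requests per day the choice of model moves the daily total by hundreds of kilowatt-hours. In a real setup, the coefficients would come from a measurement tool or provider data rather than from constants.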

That is especially useful because many of today’s biggest gains come from efficiency, not sacrifice. Recent benchmarking and industry reporting consistently point to lower-precision inference, better scheduling, improved cooling, and newer hardware as major levers for reducing impact.

Where Regolo fits

Regolo brings together three things that belong together:

- Measurability, through ai-footprint and transparent estimation.

- Operational performance, through production-grade LLM inference.

- Sustainability, through 100% green energy-powered inference.

That combination matters because many teams do not want a false choice between speed and sustainability. They want low latency, scalable inference, European data residency, privacy, and a greener infrastructure model in one platform.

A useful way to frame it: measuring footprint helps you improve your software decisions, while choosing a greener inference provider helps you improve your infrastructure baseline.

Want to build AI products with better visibility into their environmental impact?

Start with footprint measurement, then run inference on our infrastructure, designed for performance, privacy, and cleaner energy.

GitHub Code

You can download the code from our GitHub repo. If you need help, you can always reach out to our team on Discord 🤙

Resources

- Discord – Share your thoughts

- GitHub Repo – Code from our blog articles, ready to run

- Follow Us on X @regolo_ai

- Open discussion on our Subreddit Community

Built with ❤️ by the Regolo team. Questions? regolo.ai/contact or chat with us on Discord