When we talk about multi-agent AI in banking and insurance, we are really talking about splitting a heavy, regulated workflow — think KYC, AML, sanctions screening, suspicious activity reporting — into a small team of specialized agents that coordinate, leave a traceable record, and keep a human in the loop where it matters.

Done well, this pattern cuts review time on low-risk cases, concentrates analyst attention on the genuinely ambiguous ones, and produces the kind of audit trail that regulators expect under the EU AI Act from August 2026 onward.

Why this matters now

Compliance in banks and insurers is a resource-intensive function spread across three lines of defense — front office, risk, and internal audit — and each layer traditionally operates in isolation, creating blind spots and duplicate work. At the same time, AML obligations in the EU keep expanding: customer due diligence, PEP and sanctions screening, continuous transaction monitoring and suspicious activity reporting all become heavier year over year.

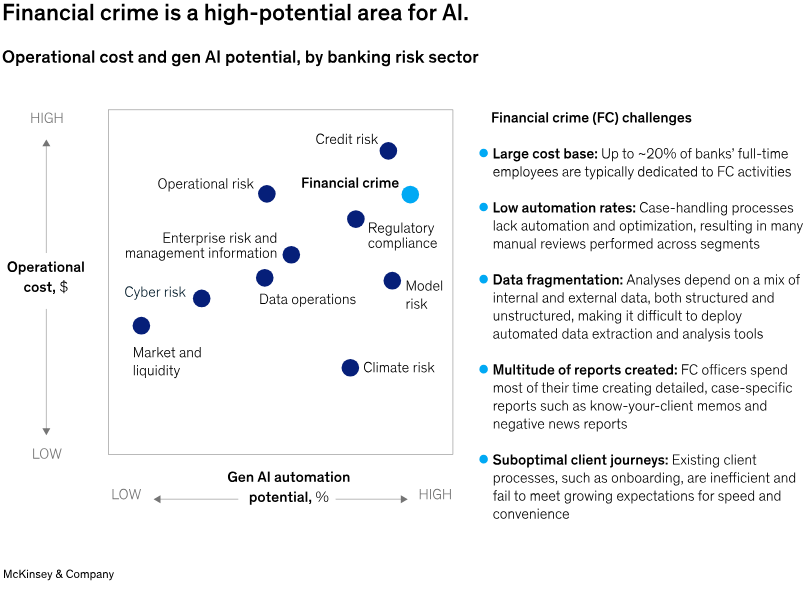

Credits to McKinsey & Company

McKinsey quantifies the scale of the problem. According to Interpol data cited in McKinsey’s 2025 analysis on agentic AI in financial crime, the financial industry detects only about 2% of global financial crime flows, despite spending on AML rising by up to 10% a year in some advanced markets between 2015 and 2022. Incremental tooling is no longer moving that number; a structural change in how work is organised is needed.

The regulatory clock is also tight: the EU AI Act’s high-risk regime becomes fully enforceable on 2 August 2026.

Annex III explicitly classifies AI used to evaluate creditworthiness and to assess risk and set prices for life and health insurance as high-risk. Penalties under the Act reach 35 million euros or 7% of global annual turnover for prohibited practices, and 15 million euros or 3% for breaches of high-risk obligations. As Freshfields notes in its AI-in-finance briefing, “the extensive obligations applicable to high-risk AI systems used for some purposes in relation to life and health insurance or for creditworthiness evaluation or scoring will be among the most significant issues for many firms”.

Deloitte reaches the same conclusion on scope, underlining that life and health insurance risk-assessment systems “if not properly designed, can lead to serious consequences for people’s lives and health, including financial exclusion and discrimination”.

The cumulative picture is sharper once you stack the frameworks. Rasa’s 2026 guidance for financial institutions puts it plainly: a large bank could face “AI Act fines, GDPR fines, DORA penalties, and NIS2 sanctions related to cybersecurity simultaneously for overlapping failures”. That is the real governance surface teams have to design for — not a single regulation, but a stack.

The market is already moving

This is not a forecast; adoption inside the big incumbents is already visible. Accenture’s banking research, covered by Banking Dive in January 2026, found that 57% of banking executives expect AI agents to be fully embedded in risk, compliance and audit functions, as well as in fraud detection and transaction monitoring, within the next three years, while 56% see broad adoption in credit assessment, loan processing and KYC. Accenture’s own recommendation to CIOs is telling: enable real-time monitoring and telemetry of agent activity, implement “multiagent validation for sensitive tasks,” and establish “an agent identity framework enabling authentication, authorization and permission”.

Gartner’s reading is consistent. Its 2026 ranking of decision intelligence platforms highlights that vendors serving financial services are converging on the same capability set (“modeling, orchestration, monitoring, and governance” combined with generative and agentic AI), with explainability and model governance treated as non-negotiable features rather than add-ons. On the risk side, Gartner has publicly projected that more than 40% of agentic AI projects will be cancelled by the end of 2027, a reminder that weak governance, rather than model quality, is what typically kills these programs.

Where a single monolithic model falls short

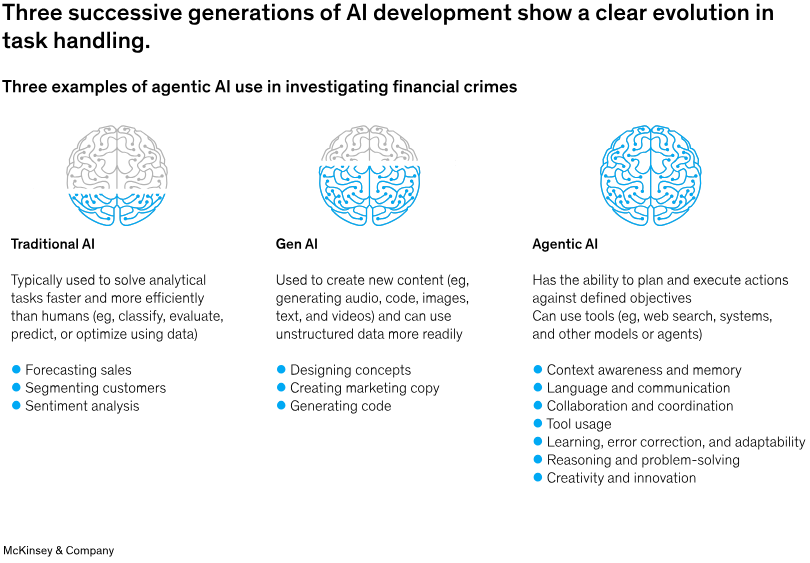

A single LLM answering “is this transaction suspicious?” behaves like a black box: it conflates document extraction, watchlist lookup, risk scoring and narrative writing in one opaque pass. That makes it hard to explain, hard to test, and hard to govern.

A multi-agent system breaks the same problem into smaller roles, each with a narrow responsibility, a clear input/output contract, and its own log line. McKinsey describes exactly this shape in its KYC case study: an “agentic AI factory” with “ten agent squads” where each squad contains “four or five AI agents, including a lead agent, two or three expert-practitioner agents, and a quality assurance (QA) agent,” producing “a full audit trail for every agent interaction, including data used, steps followed, agent conversations, rationales for conclusions, and observations by QA, compliance, and audit agents”. That audit trail is the reason this architecture tends to survive a regulator’s scrutiny, while a single-prompt copilot typically does not.
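To make the idea of a narrow input/output contract and a per-agent log line concrete, here is a minimal sketch. Every name in it (`ScreeningInput`, `ScreeningOutput`, `audit_line`, the watchlist entry and the model id) is an illustrative assumption, not something taken from McKinsey’s case study or from any specific framework.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ScreeningInput:
    """Hypothetical input contract for a screening agent."""
    case_id: str
    full_name: str
    country: str


@dataclass(frozen=True)
class ScreeningOutput:
    """Hypothetical output contract for a screening agent."""
    matched: bool
    rationale: str


def audit_line(agent: str, model_id: str, inp, out) -> str:
    """Serialise one agent step as a single JSON log line."""
    payload = json.dumps(asdict(inp), sort_keys=True)
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "model_id": model_id,
        "case_id": inp.case_id,
        # Hash the input instead of copying it, so the line carries no raw PII.
        "input_sha256": hashlib.sha256(payload.encode()).hexdigest(),
        "output": asdict(out),
    }
    return json.dumps(record, sort_keys=True)


def screening_agent(inp: ScreeningInput) -> ScreeningOutput:
    # Placeholder logic standing in for a watchlist lookup plus a model call.
    watchlist = {"ACME SHELL LTD"}
    hit = inp.full_name.upper() in watchlist
    rationale = "exact watchlist match" if hit else "no match"
    return ScreeningOutput(matched=hit, rationale=rationale)


inp = ScreeningInput(case_id="case-001", full_name="Acme Shell Ltd", country="IT")
out = screening_agent(inp)
line = audit_line("sanctions_screening", "placeholder-model-v1", inp, out)
print(line)
```

Because the contract is a pair of small typed records, each agent can be unit-tested in isolation, and the log line can be written by the same wrapper for every agent.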

Credits to McKinsey & Company

A reference architecture for KYC/AML

A pragmatic blueprint, consistent with McKinsey’s agentic KYC factory and Accenture’s guidance on agent identity and multiagent validation, looks like this:

- Orchestrator agent receives an onboarding or alert event and routes work, holding case state and writing audit events.

- KYC/due diligence agent extracts identity fields from documents and cross-checks registries.

- Sanctions & PEP screening agent matches names and entities against watchlists, with deterministic rules on top of the LLM to contain false positives.

- Transaction monitoring agent evaluates flows against typologies and the customer risk profile in near real time.

- Risk scoring agent aggregates signals into a band and produces a short rationale.

- SAR drafting agent assembles a draft suspicious activity report with references to the underlying evidence.

- QA agent (per McKinsey’s pattern) checks that each upstream agent completed its task to the required standard.

- Human-in-the-loop checkpoint lets a compliance officer approve, reject or escalate, and the decision is logged alongside the agent chain.
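The blueprint above can be sketched as a small orchestrator that runs the agents in order, keeps case state, and appends one audit event per step. This is a simplified illustration under stated assumptions: the agents are stubs with hard-coded rules, the SAR drafting step is omitted, and names such as `Case`, `PIPELINE` and the 100,000 euro threshold are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Case:
    case_id: str
    data: dict
    state: dict = field(default_factory=dict)  # accumulated agent outputs
    audit: list = field(default_factory=list)  # append-only audit events
    status: str = "open"


def kyc_agent(case: Case) -> dict:
    return {"identity_verified": bool(case.data.get("document_id"))}


def screening_agent(case: Case) -> dict:
    # Deterministic exact-match rule; a real agent would add fuzzy matching.
    watchlist = {"ACME SHELL LTD"}
    return {"screening_match": case.data.get("name", "").upper() in watchlist}


def monitoring_agent(case: Case) -> dict:
    # Illustrative threshold only, not a real typology.
    return {"unusual_flows": case.data.get("monthly_volume_eur", 0) > 100_000}


def risk_agent(case: Case) -> dict:
    s = case.state
    flags = sum([not s["identity_verified"], s["screening_match"], s["unusual_flows"]])
    band = "low" if flags == 0 else "medium" if flags == 1 else "high"
    return {"risk_band": band, "rationale": f"{flags} risk flag(s) raised"}


def qa_agent(case: Case) -> dict:
    required = {"identity_verified", "screening_match", "unusual_flows", "risk_band"}
    return {"qa_passed": required.issubset(case.state)}


PIPELINE = [
    ("kyc", kyc_agent),
    ("screening", screening_agent),
    ("monitoring", monitoring_agent),
    ("risk_scoring", risk_agent),
    ("qa", qa_agent),
]


def orchestrate(case: Case) -> Case:
    for name, agent in PIPELINE:
        result = agent(case)
        case.state.update(result)
        case.audit.append({"case_id": case.case_id, "agent": name, "result": result})
    s = case.state
    needs_human = s["risk_band"] != "low" or s["screening_match"] or not s["qa_passed"]
    case.status = "pending_human_review" if needs_human else "auto_cleared"
    case.audit.append(
        {"case_id": case.case_id, "agent": "orchestrator", "result": {"status": case.status}}
    )
    return case


clean = orchestrate(Case("case-001", {"document_id": "ID1", "name": "Jane Doe", "monthly_volume_eur": 500}))
flagged = orchestrate(Case("case-002", {"document_id": "ID2", "name": "Acme Shell Ltd"}))
print(clean.status, flagged.status)
```

Keeping the routing decision in the orchestrator, rather than in any single agent, means the rule that sends a case to a human lives in one place and can be reviewed on its own.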

Accenture’s guidance applies neatly on top: each agent needs its own identity, scoped permissions, and real-time telemetry, while the orchestrator enforces multiagent validation before any sensitive action completes.
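A minimal sketch of what agent identity, scoped permissions and multiagent validation could look like in code follows. The scope strings, the `AgentIdentity` type and the `submit_sar_draft` function are assumptions made for illustration; Accenture’s guidance describes the principle, not this implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    scopes: frozenset  # permissions granted to this agent only


class PermissionDenied(Exception):
    pass


def require_scope(agent: AgentIdentity, scope: str) -> None:
    if scope not in agent.scopes:
        raise PermissionDenied(f"{agent.agent_id} lacks scope {scope!r}")


def submit_sar_draft(case_id: str, author: AgentIdentity, validators: list) -> dict:
    """Release a SAR draft for human review only after independent validation.

    `validators` is the list of agents that have approved the draft.
    """
    require_scope(author, "sar:draft")
    independent = [
        v for v in validators
        if v.agent_id != author.agent_id and "sar:validate" in v.scopes
    ]
    if not independent:
        raise PermissionDenied("no independent validator approved the draft")
    return {
        "case_id": case_id,
        "drafted_by": author.agent_id,
        "validated_by": [v.agent_id for v in independent],
        "next_step": "human_review",
    }


sar_agent = AgentIdentity("sar-drafter", frozenset({"sar:draft"}))
qa = AgentIdentity("qa-agent", frozenset({"sar:validate"}))
kyc = AgentIdentity("kyc-agent", frozenset({"documents:read"}))

print(submit_sar_draft("case-002", sar_agent, [qa]))
```

In this sketch an agent cannot validate its own draft, and an agent without the drafting scope cannot start the action at all, which is the property the sensitive-task rule is meant to guarantee.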

The control conversation, not the productivity one

A useful reframing comes from industry commentary collecting OCC, IBM and BIS 2025 positions: AI in financial institutions “is no longer only a productivity conversation. It is a control conversation”. The same analysis warns that “generic copilot-style tools often disappoint” in compliance environments because they do not automatically create “evidence-linked outputs, controlled routing, retained provenance, governed change management” — which are precisely what auditors and supervisors ask to see.

Deloitte’s own work with European financial services aligns with this: the AI Act’s focus on high-risk systems forces firms to treat AI as “a digital component of products” that must meet safety, transparency and fundamental-rights standards, not as a productivity tool bolted on the side. Accenture, in its Intelligent Compliance report, makes the same point from the operational angle, stressing that compliance functions must “adopt advanced technologies such as AI, robotic process automation (RPA) and regulatory technology (RegTech) to simplify and automate compliance and controls testing” — but only once data quality and standardisation issues, which remain “significant barriers to progress,” are addressed.

Where we fit

For a European bank or insurer, the decisive question is not only “which agent framework do we use?” but also “where does the inference actually happen, and under which jurisdiction?” This is where we come in.

We run GPU inference on open models from data centers under EU jurisdiction and GDPR, with zero data retention on inference content and renewable-energy-powered infrastructure. For a multi-agent compliance workflow, that translates into a few concrete properties:

- Data sovereignty by default. Customer identity documents, transaction descriptions and draft SAR narratives never leave EU infrastructure, which helps when a supervisor asks where personal data is processed.

- Zero retention at the inference layer. Prompts and completions are not stored on our side; the authoritative record is the audit log the institution keeps under its own control, matching the evidence-retention expectations described in the OCC/IBM/BIS synthesis.

- GPUs for bursty workloads. AML monitoring is spiky — batch re-screening, end-of-month reviews, surges after a sanctions update — and scale-to-zero removes idle GPU spend between peaks.

- OpenAI-style API on open models. Teams can swap models per agent (a lean one for extraction, a stronger one for SAR narratives) without rewriting the stack, keeping Accenture’s agent-identity and governance model intact across providers.
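As an illustration of the per-agent model swap, the sketch below keeps the model choice in a small config table and builds a chat-completions-style request body from it. The base URL and model names are placeholders, not real endpoints or model ids, and the code only constructs the payload without sending it.

```python
import json

# Hypothetical endpoint and model names; replace with the provider's real values.
BASE_URL = "https://inference.example/v1"

AGENT_MODELS = {
    "kyc_extraction": {"model": "small-open-model-placeholder", "temperature": 0.0},
    "sar_drafting": {"model": "large-open-model-placeholder", "temperature": 0.2},
}


def build_request(agent: str, system_prompt: str, user_content: str) -> dict:
    """Build an OpenAI-style chat completion request for a given agent."""
    cfg = AGENT_MODELS[agent]
    return {
        "url": f"{BASE_URL}/chat/completions",
        "body": {
            "model": cfg["model"],
            "temperature": cfg["temperature"],
            "messages": [
                {"role": "system", "content": system_prompt},
                {"role": "user", "content": user_content},
            ],
        },
    }


req = build_request(
    "kyc_extraction",
    "Extract name, date of birth and document number as JSON.",
    "<document text goes here>",
)
print(json.dumps(req, indent=2))
```

Swapping the model behind an agent then becomes a one-line change in `AGENT_MODELS`, and the chosen model id can be written to the audit log alongside each step.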

None of this removes the need for conformity assessment, FRIA, or human oversight. It makes the technical substrate compatible with those obligations rather than fighting them.

How this connects to the three lines of defense

Mapping agents to the bank’s control structure keeps governance clean:

| Line of defense | Role of agents | Human checkpoint |
|---|---|---|
| First line (front office) | KYC extraction, instant policy guidance | Relationship manager confirms high-risk onboarding |
| Second line (risk/compliance) | Screening, transaction monitoring, risk scoring, QA agent | Compliance officer reviews medium/high-risk cases |
| Third line (internal audit) | Read-only access to audit log, re-execution of past cases | Auditor samples cases and replays the agent chain |

The audit log is the connective tissue. If a supervisor asks how a given decision was made last Tuesday, we replay the case by its id and show the agent chain, the model ids, and the human approver: the McKinsey-style “full audit trail for every agent interaction”.
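A replay of this kind can be sketched as a read-only query over the audit log. The event schema used here (`seq`, `kind`, `agent`, `model_id`, `approver`) is a hypothetical one chosen for the example, not a documented format.

```python
def replay(audit_log: list, case_id: str) -> dict:
    """Reconstruct the agent chain, model ids and approver for one case."""
    events = sorted(
        (e for e in audit_log if e["case_id"] == case_id),
        key=lambda e: e["seq"],
    )
    steps = [e for e in events if e["kind"] == "agent_step"]
    decisions = [e for e in events if e["kind"] == "human_decision"]
    return {
        "case_id": case_id,
        "agent_chain": [e["agent"] for e in steps],
        "model_ids": sorted({e["model_id"] for e in steps}),
        # The most recent human decision is the one that closed the case.
        "human_approver": decisions[-1]["approver"] if decisions else None,
    }


# Illustrative log entries, deliberately out of order and mixed across cases.
log = [
    {"case_id": "case-002", "seq": 2, "kind": "agent_step", "agent": "screening", "model_id": "model-b"},
    {"case_id": "case-001", "seq": 1, "kind": "agent_step", "agent": "kyc", "model_id": "model-a"},
    {"case_id": "case-002", "seq": 1, "kind": "agent_step", "agent": "kyc", "model_id": "model-a"},
    {"case_id": "case-002", "seq": 3, "kind": "human_decision", "approver": "officer-17"},
]

print(replay(log, "case-002"))
```

Since the function only reads the log, the same code can be exposed to the third line with read-only access.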

FAQ

Is a multi-agent setup considered a high-risk AI system under the EU AI Act?

If any agent contributes to credit scoring or to risk assessment and pricing for life and health insurance, the overall system falls under Annex III high-risk obligations from 2 August 2026, including logging, human oversight and a Fundamental Rights Impact Assessment. This is the scope that Freshfields counts among the most significant issues for many firms and that Deloitte also highlights. Systems used only for internal productivity may fall outside that scope, but the classification must be documented rather than assumed.

Why split a workflow into multiple agents instead of using one large prompt?

Specialized agents give narrower input/output contracts, cleaner unit tests and a per-step audit trail — the pattern McKinsey documents as an “agentic AI factory” of small squads with distinct roles and an embedded QA agent. Accenture adds the governance rationale: sensitive tasks should go through multiagent validation and scoped identities, not a single opaque model.

How does Regolo.ai help with GDPR and data residency?

Inference runs on GPUs located in Italy, under EU jurisdiction, with zero data retention on prompts and completions. Customer PII used inside a KYC or AML prompt is processed within the EU and is not persisted on the inference provider’s side, which aligns with the evidence-retention and data-sovereignty concerns Rasa flags for financial institutions exposed to the AI Act, GDPR, DORA and NIS2 at the same time.

Do we still need human reviewers if the agents are well-designed?

Yes. The AI Act requires human oversight that is effective, not symbolic — a point Deloitte emphasises for high-risk systems in insurance and credit. In practice, the orchestrator blocks case closure on non-low-risk or matched-screening outcomes until a human decision is logged.
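The closure rule described above can be sketched as a single gate function. The field names (`risk_band`, `screening_match`, `kind`, `approver`) and the `ClosureBlocked` exception are assumptions made for this example.

```python
class ClosureBlocked(Exception):
    pass


def close_case(case: dict, audit_log: list) -> dict:
    """Close a case, refusing if a required human decision is missing."""
    needs_human = case["risk_band"] != "low" or case["screening_match"]
    decision = next(
        (
            e for e in audit_log
            if e.get("kind") == "human_decision" and e["case_id"] == case["case_id"]
        ),
        None,
    )
    if needs_human and decision is None:
        raise ClosureBlocked(f"{case['case_id']} requires a logged human decision")
    return {
        "case_id": case["case_id"],
        "status": "closed",
        "approved_by": decision["approver"] if decision else None,
    }


low_risk = {"case_id": "case-001", "risk_band": "low", "screening_match": False}
matched = {"case_id": "case-002", "risk_band": "low", "screening_match": True}
log = [{"kind": "human_decision", "case_id": "case-002", "approver": "officer-17"}]

print(close_case(low_risk, []))
print(close_case(matched, log))
```

Note that a screening match forces review even when the risk band is low, which mirrors the "non-low-risk or matched-screening" condition in the answer above.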

What about open models vs. closed models for this use case?

Open models hosted on EU infrastructure give two practical advantages for regulated teams: the exact model per agent can be chosen and versioned, and the data flow stays inside a known jurisdiction. Closed proprietary APIs can still be used, but most teams prefer to keep the sensitive agents (KYC, screening, SAR drafting) on an EU-hosted, zero-retention path — consistent with the control-first framing shared across recent industry analyses.

How do we start without disrupting existing compliance systems?

Run the multi-agent pipeline in shadow mode alongside current AML/KYC tooling, log both outcomes, and let compliance officers compare. Only after agreement on quality and audit completeness does the agentic path move into the primary workflow, keeping the legacy system as a fallback and a control benchmark. This sequencing matches Accenture’s recommendation to roll out agents under “a central governance model” with real-time telemetry before broadening scope.
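A shadow-mode comparison can start as something as simple as the sketch below, which takes the outcome per case from both systems and reports agreement plus the cases the agentic path would have cleared while the legacy system escalated. The outcome labels `"clear"` and `"escalate"` and the function name are illustrative assumptions.

```python
def compare_shadow(legacy: dict, agentic: dict) -> dict:
    """Compare per-case outcomes from the legacy system and the shadow pipeline."""
    shared = sorted(legacy.keys() & agentic.keys())
    agree = sum(1 for c in shared if legacy[c] == agentic[c])
    # Cases the legacy system escalated but the agents cleared deserve review first.
    missed = [c for c in shared if legacy[c] == "escalate" and agentic[c] == "clear"]
    extra = [c for c in shared if legacy[c] == "clear" and agentic[c] == "escalate"]
    return {
        "cases": len(shared),
        "agreement_rate": agree / len(shared) if shared else 0.0,
        "missed_by_agents": missed,
        "extra_escalations": extra,
    }


legacy = {"c1": "clear", "c2": "escalate", "c3": "escalate", "c4": "clear"}
agentic = {"c1": "clear", "c2": "escalate", "c3": "clear", "c4": "escalate"}

report = compare_shadow(legacy, agentic)
print(report)
```

Separating missed escalations from extra ones matters because the two errors carry different risk: the first is a potential compliance gap, the second is analyst workload.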