About Euraika

Euraika is a European AI governance, resilience, and compliance technology company headquartered in Belgium. It builds intelligent platforms that help organizations navigate GDPR, NIS2, DORA, and the Belgian CyFUN cybersecurity standard through AI-powered guidance, automated verification, and regulatory knowledge retrieval.

Our mission is to make regulatory compliance accessible, auditable, scalable, and resilient.

– Irene Personne – CEO, Euraika

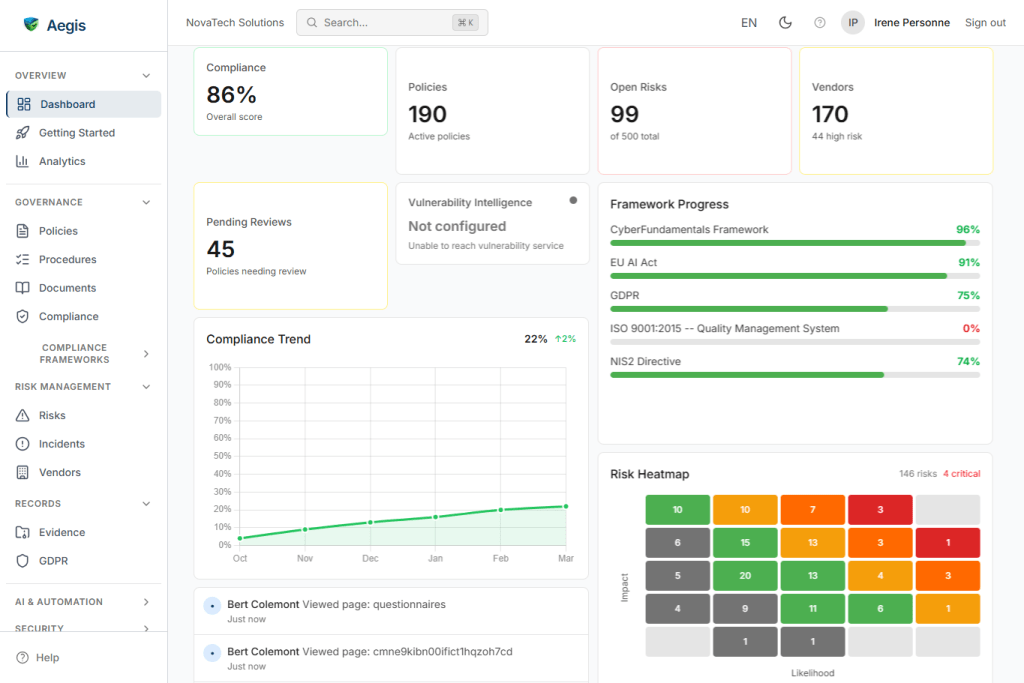

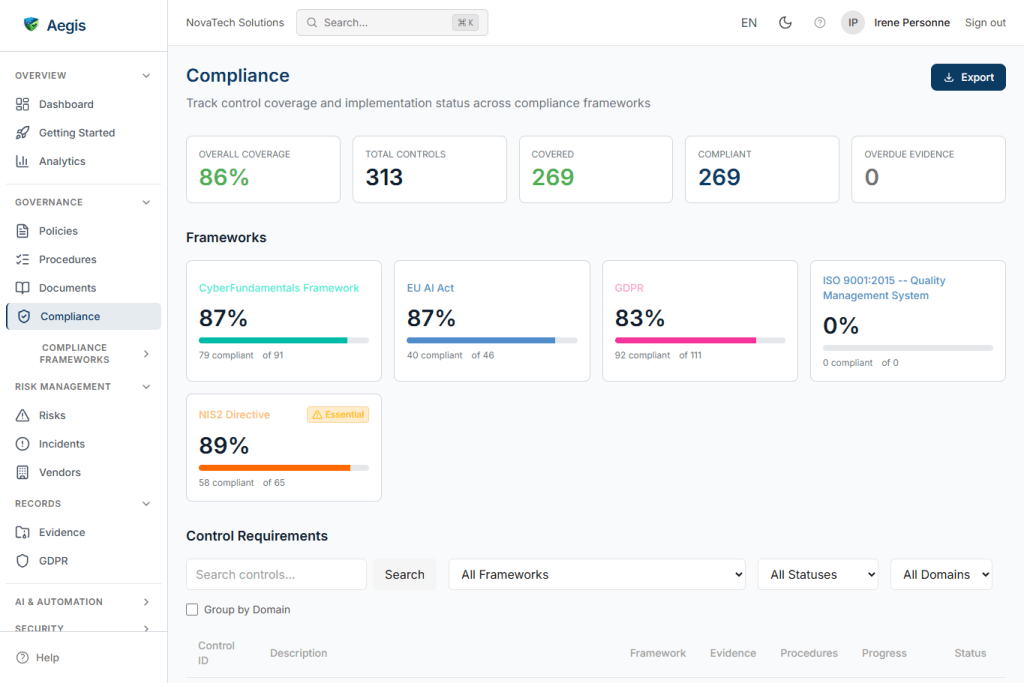

Aegis: an AI-powered compliance command center

Euraika’s flagship product, Aegis, is an AI-powered resilience and compliance command center. It combines large language model inference with deep regulatory knowledge retrieval, multi-stage response verification, and full audit trail capabilities, delivered as a multi-tenant SaaS platform with enterprise-grade access controls.

Before partnering with Regolo.ai, we managed our own GPU inference infrastructure on Kubernetes. While this gave us control, it created significant operational overhead: capacity planning, driver management, model optimization, and around-the-clock monitoring. These demands pulled engineering time away from our core value: the compliance intelligence layer.

Regolo eliminated GPU infrastructure management while scaling our compliance platform to production, serving enterprise customers with fast response times and full regulatory auditability.

Initial Pain Points

- GPU infrastructure overhead: managing self-hosted inference backends required constant attention to hardware compatibility, capacity planning, and model optimization pipelines.

- Scaling limitations: autoscaling GPU workloads made it difficult to guarantee consistent performance.

- Slow model iteration: every model update required optimization, testing, and redeployment — turning what should be a configuration change into a multi-day process.

- Cost unpredictability: reserved GPU capacity meant paying for idle compute during off-peak hours, while peak demand occasionally exceeded available resources.

- Engineering distraction: our small team was splitting focus between compliance AI development and GPU infrastructure operations.

Impact on Operations

These challenges directly slowed our product roadmap. Every new compliance capability required infrastructure provisioning. Pipeline improvements were bottlenecked by GPU availability. Our time-to-market for new regulatory frameworks was measured in weeks rather than days.

Most critically, the operational burden made it difficult to offer reliable SLAs to enterprise customers who expected consistent latency and uptime from a compliance platform.

Solution Implementation

- OpenAI-compatible API: Regolo.ai’s standard API endpoints meant zero changes to our existing gateway architecture. Drop-in replacement with no protocol translation needed.

- Large model access: Ability to use powerful, high-parameter-count models without any GPU provisioning or optimization on our side — models that would have required multi-GPU setups locally.

- Embedding support: High-quality embedding models powering our entire regulatory knowledge retrieval pipeline.

- Streaming support: Full Server-Sent Events streaming, enabling real-time compliance chat with fast time-to-first-token.

- Usage-based billing: Per-token pricing that directly correlates with customer consumption, enabling transparent per-tenant cost attribution.
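The per-tenant cost attribution mentioned in the last point can be sketched roughly as follows. This is a minimal illustration, not Euraika's actual billing code: the prices, the tenant identifiers, and the `UsageRecord` type are hypothetical. It assumes only that each response carries the `prompt_tokens` and `completion_tokens` counts that OpenAI-compatible APIs report in their `usage` object.

```python
from collections import defaultdict
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical prices per 1,000 tokens; not Regolo.ai's actual pricing.
PRICE_PER_1K_INPUT = Decimal("0.50")
PRICE_PER_1K_OUTPUT = Decimal("1.50")


@dataclass(frozen=True)
class UsageRecord:
    """Token counts for one request, tagged with the tenant that made it."""
    tenant_id: str
    prompt_tokens: int
    completion_tokens: int


def cost_of(record: UsageRecord) -> Decimal:
    """Price a single request from its input and output token counts."""
    weighted = (
        Decimal(record.prompt_tokens) * PRICE_PER_1K_INPUT
        + Decimal(record.completion_tokens) * PRICE_PER_1K_OUTPUT
    )
    return weighted / 1000


def attribute_costs(records) -> dict:
    """Sum request costs per tenant."""
    totals = defaultdict(Decimal)  # Decimal() starts each tenant at 0
    for record in records:
        totals[record.tenant_id] += cost_of(record)
    return dict(totals)
```

Using `Decimal` rather than floats keeps the per-tenant sums exact, which matters when the totals feed into invoices or audit reports.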

We integrated Regolo.ai through a lightweight proxy service running inside our Kubernetes cluster. It handles authentication, timeout management, and usage logging — all in a minimal footprint.
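A proxy with these three responsibilities could look roughly like the Python sketch below. It is an illustration under stated assumptions, not the service Euraika runs: the upstream URL, the timeout value, the logger name, and the function names are all hypothetical, and the transport is injectable so the forwarding logic can be exercised without a network call.

```python
import json
import logging
import urllib.request

logger = logging.getLogger("inference_proxy")  # hypothetical logger name

# Hypothetical values; a real deployment would read these from configuration.
UPSTREAM_URL = "https://inference.example.invalid/v1/chat/completions"
TIMEOUT_SECONDS = 30.0


def build_request(payload: dict, api_key: str) -> urllib.request.Request:
    """Authentication: attach the provider API key as a bearer token."""
    return urllib.request.Request(
        UPSTREAM_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def _send(request: urllib.request.Request, timeout: float) -> dict:
    """Timeout management: urlopen raises if the upstream is too slow."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def forward(payload: dict, tenant_id: str, api_key: str, send=_send) -> dict:
    """Forward one chat request and log its token usage for the tenant."""
    body = send(build_request(payload, api_key), TIMEOUT_SECONDS)
    usage = body.get("usage", {})
    # Usage logging: one line per request, keyed by tenant.
    logger.info(
        "tenant=%s model=%s prompt_tokens=%s completion_tokens=%s",
        tenant_id,
        body.get("model"),
        usage.get("prompt_tokens"),
        usage.get("completion_tokens"),
    )
    return body
```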

Our platform routes multiple inference tasks through Regolo — primary compliance chat, response verification, and complex case escalation — each mapped to the most appropriate model for the task. The gateway transparently handles model routing, so our compliance pipeline references functional roles rather than specific model names, making model swaps a simple configuration change.
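The idea of referencing functional roles instead of model names can be sketched as a small lookup table. The role names and model identifiers below are hypothetical placeholders, not the ones used in Aegis or offered by Regolo.ai; the point is only that callers never mention a concrete model.

```python
# Hypothetical role names and model identifiers, for illustration only.
ROLE_TO_MODEL = {
    "compliance-chat": "example-chat-model",
    "response-verifier": "example-verifier-model",
    "case-escalation": "example-large-model",
}


def resolve_model(role: str, routes: dict = ROLE_TO_MODEL) -> str:
    """Map a functional role to the model currently configured for it."""
    try:
        return routes[role]
    except KeyError:
        raise ValueError(f"no model configured for role {role!r}") from None


def build_payload(role: str, messages: list, routes: dict = ROLE_TO_MODEL) -> dict:
    """Build an OpenAI-style chat payload; callers pass a role, not a model."""
    return {"model": resolve_model(role, routes), "messages": messages}
```

With this shape, swapping the model behind a role means editing one entry in the table (or the configuration file it is loaded from), while every caller of `build_payload` stays unchanged.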

The entire integration layer runs on negligible compute resources — a dramatic reduction compared to our previous GPU backends.

The Migration Process

The migration from self-hosted GPU backends to Regolo.ai was completed in under a week:

- Days 1–2: Built a lightweight proxy service to handle authentication and request forwarding. The OpenAI-compatible API meant no protocol translation.

- Day 3: Configured routing from our existing logical service names to the Regolo-backed proxy. Our gateway’s aliasing feature handled the translation transparently.

- Day 4: Ran our automated quality benchmarks against the Regolo-backed pipeline. Retrieval quality, verification accuracy, and citation extraction all met or exceeded our previous baselines.

- Day 5: Deployed to production via our standard GitOps pipeline. Zero downtime. Decommissioned GPU backends.
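The Day 4 check, comparing the new pipeline against previous baselines, can be expressed as a simple regression gate. The sketch below is an assumption about how such a gate might look, not Euraika's benchmark suite: the metric names and scores in the usage example are invented, and it assumes every metric is a higher-is-better score.

```python
def regressions(baseline: dict, candidate: dict, tolerance: float = 0.0) -> dict:
    """Return the metrics where the candidate falls below the baseline.

    Each entry maps a metric name to (baseline_value, candidate_value).
    A metric missing from the candidate counts as a regression.
    """
    failed = {}
    for name, base in baseline.items():
        value = candidate.get(name)
        if value is None or value < base - tolerance:
            failed[name] = (base, value)
    return failed


# Usage with made-up scores: an empty result means the gate passes.
baseline = {"retrieval_recall": 0.90, "verification_accuracy": 0.95}
candidate = {"retrieval_recall": 0.92, "verification_accuracy": 0.95}
gate_passed = not regressions(baseline, candidate)
```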

Measurable Results

| Metric | Improvement |
| --- | --- |
| Infrastructure compute costs | ~60% reduction in off-peak months |
| Model deployment time | From days to under an hour (95% faster) |
| Time-to-first-token (streaming) | ~60% faster |
| Engineering hours on infra ops | From ~30 hrs/month to ~2 hrs/month (93% reduction) |
| Service availability | Significant improvement, exceeding 99.9% |
| Accessible model capability | Access to models 3–4× larger than previously feasible |

Impact on Business

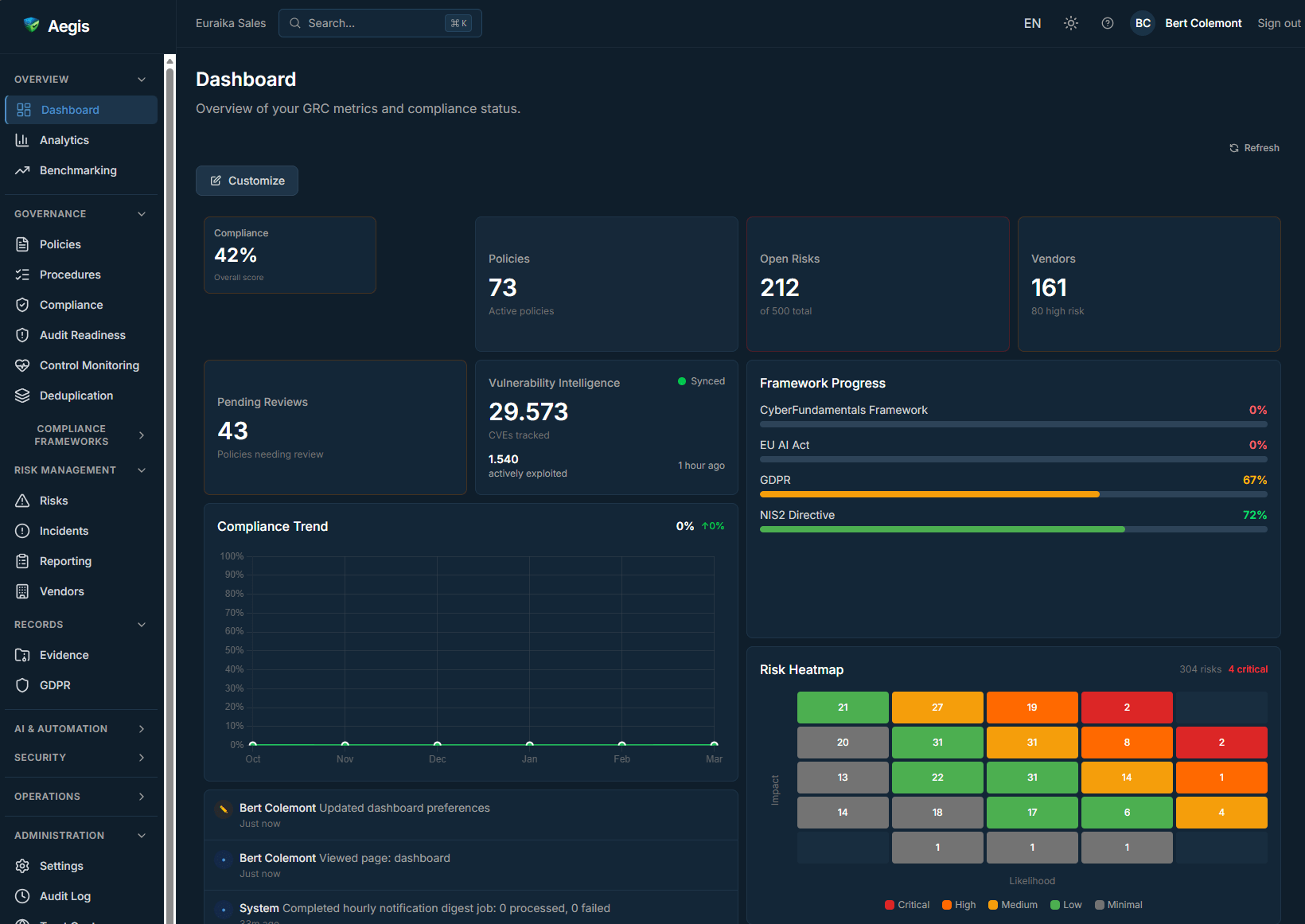

The shift to Regolo freed our engineering team to focus entirely on compliance intelligence. In the three weeks following migration, we shipped:

- Automated vulnerability enrichment — continuous sync with major vulnerability databases

- Regulatory document sync — automated ingestion of GDPR and NIS2 source texts from official EU publications

- CyFUN framework integration — Belgian cybersecurity standard added to our knowledge base

- Enterprise multi-tenancy — production-ready tenant isolation with per-tenant quotas and access controls

- Multi-stage claim verification — automated fact-checking of every compliance response against authoritative sources

None of these features required GPU infrastructure changes. Each was a pure product improvement — exactly where our engineering effort belongs.

What Euraika said about Regolo

Regolo.ai removed the single biggest distraction from our roadmap: GPU operations. We went from spending a third of our engineering capacity on model management to spending nearly zero. The inference quality is excellent for compliance use cases, and the OpenAI-compatible API meant we changed nothing in our existing architecture. For a European AI company that needs to move fast on regulatory features, having a reliable inference partner is transformative.

— Bert Colemont, Co-founder Euraika

We see Regolo.ai as the inference backbone that lets us focus on what differentiates Euraika: resilience-driven compliance intelligence. Regolo handles model hosting, scaling, and serving; we handle the governance, verification, auditability, and regulatory knowledge enterprises need to stay resilient. It’s a clean separation of concerns that plays to each company’s strengths.

Future Plans

- Multilingual expansion: leveraging additional Regolo-hosted models for EU-wide compliance coverage across French, German, Dutch, and Italian.

- Knowledge base scaling: as our regulatory corpus grows with new frameworks (DORA, AI Act), we expect to increase embedding throughput via Regolo.

- Continuous compliance monitoring: using Regolo inference for real-time compliance drift detection across client portfolios.

Industry Impact

Regulated industries face a fundamental tension: they need AI to manage increasingly complex compliance requirements, but they cannot compromise on auditability, data governance, or vendor transparency. Self-hosting LLMs addresses transparency but creates unsustainable operational overhead for most organizations.

Regolo.ai resolves this tension by providing European-hosted, transparent inference with known models, a standard API, and predictable billing. This lets compliance technology providers like Euraika build sophisticated governance layers without being distracted by infrastructure.

Innovation Enablement

Regolo.ai is an enabler of the emerging AI governance stack — the layer between raw inference and enterprise compliance requirements. By providing reliable, high-quality inference as a service, Regolo allows companies to invest in the differentiating layers: regulatory knowledge retrieval, automated verification, citation tracking, audit trails, and multi-tenant isolation.

Our Aegis platform — with its multi-stage verification engine, thousands of indexed regulatory provisions, and real-time streaming — would not exist in its current form without a reliable inference provider. Regolo made it possible for a small European team to build a production-grade, resilience-driven compliance AI platform that competes with organizations with 10× our resources.